garage.envs.mujoco.half_cheetah_dir_env¶

Variant of the HalfCheetahEnv with different target directions.

-

class

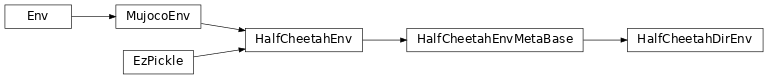

HalfCheetahDirEnv(task=None)¶ Bases:

garage.envs.mujoco.half_cheetah_env_meta_base.HalfCheetahEnvMetaBase

Half-cheetah environment with target direction, as described in [1].

The code is adapted from https://github.com/cbfinn/maml_rl/blob/9c8e2ebd741cb0c7b8bf2d040c4caeeb8e06cc95/rllab/envs/mujoco/half_cheetah_env_rand_direc.py

The half-cheetah follows the dynamics from MuJoCo [2], and receives at each time step a reward composed of a control cost and a term equal to its velocity in the target direction. The tasks are generated by sampling the target directions from a Bernoulli distribution on {-1, 1} with parameter 0.5 (-1: backward, +1: forward).

- [1] Chelsea Finn, Pieter Abbeel, Sergey Levine, “Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks”, 2017 (https://arxiv.org/abs/1703.03400)

- [2] Emanuel Todorov, Tom Erez, Yuval Tassa, “MuJoCo: A physics engine for model-based control”, 2012 (https://homes.cs.washington.edu/~todorov/papers/TodorovIROS12.pdf)
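The reward and task sampling described above can be sketched in plain NumPy, without MuJoCo. This is an illustrative sketch, not the library's code: the function names, the explicit `x_before`/`x_after` positions, and the control-cost coefficient `ctrl_cost_coeff=0.05` are assumptions made for the example.

```python
import numpy as np


def sample_directions(num_tasks, rng):
    """Draw target directions from {-1, +1} with equal probability."""
    # Bernoulli(0.5) gives values in {0, 1}; map them to {-1, +1}.
    return 2 * rng.binomial(1, p=0.5, size=num_tasks) - 1


def direction_reward(x_before, x_after, dt, action, direction,
                     ctrl_cost_coeff=0.05):
    """Return (reward, reward_forward, reward_ctrl) for one time step.

    ctrl_cost_coeff is an assumed value chosen for illustration.
    """
    if direction not in (1.0, -1.0):
        raise ValueError('direction must be 1.0 or -1.0')
    # Velocity along x, signed by the target direction.
    velocity = (x_after - x_before) / dt
    reward_forward = direction * velocity
    # The control cost enters the reward as a non-positive term.
    reward_ctrl = -ctrl_cost_coeff * float(np.sum(np.square(action)))
    return reward_forward + reward_ctrl, reward_forward, reward_ctrl
```

With `direction=-1.0`, moving in the positive x direction yields a negative `reward_forward`, so the same motion is rewarded or penalized depending on the sampled task.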

-

metadata¶

-

reward_range¶

-

spec¶

-

action_space¶

-

observation_space¶

-

step(self, action)¶ Take one step in the environment.

Equivalent to step in HalfCheetahEnv, but with different rewards.

- Parameters

action (np.ndarray) – The action to take in the environment.

- Raises

ValueError – If the current direction is not 1.0 or -1.0.

- Returns

  - observation (np.ndarray): The observation of the environment.

  - reward (float): The reward acquired at this time step.

  - done (boolean): Whether the environment was completed at this time step. Always False for this environment.

  - infos (dict):

    - reward_forward (float): Reward for moving, ignoring the control cost.

    - reward_ctrl (float): The reward for acting, i.e. the control cost (always negative).

    - task_dir (float): Target direction. 1.0 for forwards, -1.0 for backwards.

- Return type

tuple
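As a rough illustration of how the `infos` entries documented above might be consumed, the sketch below aggregates a list of per-step `infos` dicts into episode totals. The helper name `summarize_infos` is hypothetical, and treating the total as `reward_forward + reward_ctrl` is an assumption based on the reward description in this page.

```python
import numpy as np


def summarize_infos(infos):
    """Sum the per-step reward terms from a list of step() infos dicts."""
    task_dirs = {float(np.asarray(i['task_dir']).sum()) for i in infos}
    if len(task_dirs) != 1:
        raise ValueError('all steps must come from the same task')
    # np.asarray(...).sum() accepts both plain floats and 1-element arrays.
    return_forward = sum(float(np.asarray(i['reward_forward']).sum())
                         for i in infos)
    return_ctrl = sum(float(np.asarray(i['reward_ctrl']).sum())
                      for i in infos)
    return {
        'task_dir': task_dirs.pop(),
        'return_forward': return_forward,
        'return_ctrl': return_ctrl,
        # Assumption: the step reward is the sum of the two terms.
        'return_total': return_forward + return_ctrl,
    }
```

Because `done` is always False, the caller decides how many steps make up an episode before summarizing.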

-

sample_tasks(self, num_tasks)¶ Sample a list of num_tasks tasks.

-

set_task(self, task)¶ Reset with a task.
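A typical way to combine `sample_tasks`, `set_task`, `reset`, and `step` is one fixed-length episode per sampled task. The sketch below uses a hypothetical `StubDirEnv` with toy dynamics so that it runs without MuJoCo; the task format (a dict with a `'direction'` key) and the helper `returns_per_task` are assumptions for illustration, not the library's implementation.

```python
import numpy as np


class StubDirEnv:
    """Hypothetical stand-in that mimics the documented task methods."""

    def __init__(self, seed=0):
        self._rng = np.random.default_rng(seed)
        self._task = {'direction': 1.0}

    def sample_tasks(self, num_tasks):
        # Assumed task format: one dict per task with a 'direction' key.
        directions = 2 * self._rng.binomial(1, p=0.5, size=num_tasks) - 1
        return [{'direction': float(d)} for d in directions]

    def set_task(self, task):
        self._task = task

    def reset(self):
        return np.zeros(1)

    def step(self, action):
        velocity = float(action[0])  # toy dynamics: the action is the velocity
        reward = self._task['direction'] * velocity
        infos = {'task_dir': self._task['direction']}
        return np.array([velocity]), reward, False, infos


def returns_per_task(env, policy, num_tasks, horizon):
    """Run one fixed-length episode per sampled task and collect returns."""
    results = []
    for task in env.sample_tasks(num_tasks):
        env.set_task(task)
        obs = env.reset()
        total = 0.0
        for _ in range(horizon):  # done is always False, so cap the length
            obs, reward, _done, _infos = env.step(policy(obs))
            total += reward
        results.append((task, total))
    return results
```

A policy that always pushes forward earns a positive return on +1 tasks and a negative return on -1 tasks, which is what makes the direction something the agent has to adapt to.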

-

viewer_setup(self)¶ Start the viewer.

-

reset_model(self)¶ Reset the robot degrees of freedom (qpos and qvel). Implement this in each subclass.

-

seed(self, seed=None)¶ Sets the seed for this env’s random number generator(s).

Note

Some environments use multiple pseudorandom number generators. We want to capture all such seeds used in order to ensure that there aren’t accidental correlations between multiple generators.

- Returns

Returns the list of seeds used in this env’s random number generators. The first value in the list should be the “main” seed, or the value which a reproducer should pass to ‘seed’. Often, the main seed equals the provided ‘seed’, but this won’t be true if seed=None, for example.

- Return type

list<bigint>
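The convention described above (return every seed in use, main seed first) can be sketched with NumPy's `SeedSequence`. The class `TwoGeneratorEnv` and its second generator `noise_random` are hypothetical and exist only to illustrate the idea; this is not how gym or garage implement `seed`.

```python
import numpy as np


class TwoGeneratorEnv:
    """Hypothetical env with two RNGs, used only to illustrate seed()."""

    def seed(self, seed=None):
        root = np.random.SeedSequence(seed)
        # Equals `seed` when one is given; fresh entropy when seed=None.
        main_seed = int(root.entropy)
        # Derive the second seed deterministically from the main one.
        noise_seed = int(root.spawn(1)[0].generate_state(1)[0])
        self.np_random = np.random.default_rng(main_seed)
        self.noise_random = np.random.default_rng(noise_seed)
        return [main_seed, noise_seed]
```

Passing the first element of the returned list back into `seed` reproduces both generators, including in the `seed=None` case where the main seed is not known in advance.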

-

reset(self)¶ Resets the state of the environment and returns an initial observation.

- Returns

the initial observation.

- Return type

observation (object)

-

set_state(self, qpos, qvel)¶

-

property dt(self)¶

-

do_simulation(self, ctrl, n_frames)¶

-

render(self, mode='human', width=DEFAULT_SIZE, height=DEFAULT_SIZE, camera_id=None, camera_name=None)¶ Renders the environment.

The set of supported modes varies per environment. (And some environments do not support rendering at all.) By convention, if mode is:

human: render to the current display or terminal and return nothing. Usually for human consumption.

rgb_array: Return a numpy.ndarray with shape (x, y, 3), representing RGB values for an x-by-y pixel image, suitable for turning into a video.

ansi: Return a string (str) or StringIO.StringIO containing a terminal-style text representation. The text can include newlines and ANSI escape sequences (e.g. for colors).

Note

Make sure that your class’s metadata ‘render.modes’ key includes the list of supported modes. It’s recommended to call super() in implementations to use the functionality of this method.

- Parameters

mode (str) – the mode to render with

Example:

    class MyEnv(Env):
        metadata = {'render.modes': ['human', 'rgb_array']}

        def render(self, mode='human'):
            if mode == 'rgb_array':
                return np.array(...)  # return RGB frame suitable for video
            elif mode == 'human':
                ...  # pop up a window and render
            else:
                super(MyEnv, self).render(mode=mode)  # just raise an exception

-

close(self)¶ Override close in your subclass to perform any necessary cleanup.

Environments will automatically close() themselves when garbage collected or when the program exits.

-

get_body_com(self, body_name)¶

-

state_vector(self)¶

-

property unwrapped(self)¶ Completely unwrap this env.

- Returns

The base non-wrapped gym.Env instance

- Return type

gym.Env