garage¶

Garage Base.

-

class

EpisodeBatch¶ Bases:

collections.namedtuple()

A tuple representing a batch of whole episodes.

Data type for on-policy algorithms.

An EpisodeBatch represents a batch of whole episodes, produced when one or more agents interact with one or more environments.

| Symbol | Description |
| --- | --- |
| \(N\) | Episode batch dimension |
| \([T]\) | Variable-length time dimension of each episode |
| \(S^*\) | Single-step shape of a time-series tensor |
| \(N \bullet [T]\) | A dimension computed by flattening a variable-length time dimension \([T]\) into a single batch dimension with length \(\sum_{i \in N} [T]_i\) |

-

episode_infos¶ A dict of numpy arrays containing the episode-level information of each episode. Each value of this dict should be a numpy array of shape \((N, S^*)\). For example, in goal-conditioned reinforcement learning this could contain the goal state for each episode.

-

observations¶ A numpy array of shape \((N \bullet [T], O^*)\) containing the (possibly multi-dimensional) observations for all time steps in this batch. These must conform to EnvStep.observation_space.

- Type

numpy.ndarray

-

last_observations¶ A numpy array of shape \((N, O^*)\) containing the last observation of each episode. This is necessary since there is one more observation than actions in every episode.

- Type

numpy.ndarray

-

actions¶ A numpy array of shape \((N \bullet [T], A^*)\) containing the (possibly multi-dimensional) actions for all time steps in this batch. These must conform to EnvStep.action_space.

- Type

numpy.ndarray

-

rewards¶ A numpy array of shape \((N \bullet [T])\) containing the rewards for all time steps in this batch.

- Type

numpy.ndarray

-

env_infos¶ A dict of numpy arrays containing arbitrary environment state information. Each value of this dict should be a numpy array of shape \((N \bullet [T])\) or \((N \bullet [T], S^*)\).

-

agent_infos¶ A dict of numpy arrays containing arbitrary agent state information. Each value of this dict should be a numpy array of shape \((N \bullet [T])\) or \((N \bullet [T], S^*)\). For example, this may contain the hidden states from an RNN policy.

-

step_types¶ A numpy array of StepType with shape \((N,)\) containing the time step types for all transitions in this batch.

- Type

numpy.ndarray

-

lengths¶ An integer numpy array of shape \((N,)\) containing the length of each episode in this batch. This may be used to reconstruct the individual episodes.

- Type

numpy.ndarray

- Raises

ValueError – If any of the above attributes do not conform to their prescribed types and shapes.
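The relationship between the flattened \(N \bullet [T]\) dimension and the lengths attribute can be sketched without the library. The following is a minimal illustration that uses plain Python lists in place of numpy arrays; the helper name `split_by_lengths` is hypothetical and not part of garage.

```python
from itertools import accumulate

# Three toy episodes with different lengths; each inner list holds per-step rewards.
episodes = [[1.0, 0.5], [0.0, 0.0, 2.0], [3.0]]

# Flatten the variable-length time dimension into one batch dimension.
flat_rewards = [r for episode in episodes for r in episode]
lengths = [len(episode) for episode in episodes]


def split_by_lengths(flat, lengths):
    """Cut a flattened sequence back into per-episode pieces (hypothetical helper)."""
    ends = list(accumulate(lengths))
    starts = [0] + ends[:-1]
    return [flat[start:end] for start, end in zip(starts, ends)]


recovered = split_by_lengths(flat_rewards, lengths)
```

The flattened length equals the sum of the episode lengths, which is why storing lengths is enough to recover the individual episodes.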

-

classmethod

concatenate(cls, *batches)¶ Create an EpisodeBatch by concatenating EpisodeBatches.

- Parameters

batches (list[EpisodeBatch]) – Batches to concatenate.

- Returns

The concatenation of the batches.

- Return type

-

split(self)¶ Split an EpisodeBatch into a list of EpisodeBatches.

The opposite of concatenate.

- Returns

A list of EpisodeBatches, with one episode per batch.

- Return type

-

to_list(self)¶ Convert the batch into a list of dictionaries.

- Returns

Keys:

- observations (np.ndarray): Non-flattened array of observations. Has shape (T, S^*) (the unflattened state space of the current environment). observations[i] was used by the agent to choose actions[i].
- next_observations (np.ndarray): Non-flattened array of observations. Has shape (T, S^*). next_observations[i] was observed by the agent after taking actions[i].
- actions (np.ndarray): Non-flattened array of actions. Should have shape (T, S^*) (the unflattened action space of the current environment).
- rewards (np.ndarray): Array of rewards of shape (T,) (1D array of length timesteps).
- agent_infos (dict[str, np.ndarray]): Dictionary of stacked, non-flattened agent_info arrays.
- env_infos (dict[str, np.ndarray]): Dictionary of stacked, non-flattened env_info arrays.
- step_types (numpy.ndarray): A numpy array of StepType with shape (T,) containing the time step types for all transitions in this batch.
- episode_infos (dict[str, np.ndarray]): Dictionary of stacked, non-flattened episode_info arrays.

- Return type

-

classmethod

from_list(cls, env_spec, paths)¶ Create an EpisodeBatch from a list of episodes.

- Parameters

env_spec (EnvSpec) – Specification for the environment from which this data was sampled.

paths (list[dict[str, np.ndarray or dict[str, np.ndarray]]]) – Keys:

- episode_infos (dict[str, np.ndarray]): Dictionary of stacked, non-flattened episode_info arrays, each of shape (S^*).
- observations (np.ndarray): Non-flattened array of observations. Typically has shape (T, S^*) (the unflattened state space of the current environment). observations[i] was used by the agent to choose actions[i]. observations may instead have shape (T + 1, S^*).
- next_observations (np.ndarray): Non-flattened array of observations. Has shape (T, S^*). next_observations[i] was observed by the agent after taking actions[i]. Optional. Note that to ensure all information from the environment is preserved, observations should have shape (T + 1, S^*), or this key should be set. However, this method is lenient and will “duplicate” the last observation if the original last observation has been lost.
- actions (np.ndarray): Non-flattened array of actions. Should have shape (T, S^*) (the unflattened action space of the current environment).
- rewards (np.ndarray): Array of rewards of shape (T,) (1D array of length timesteps).
- agent_infos (dict[str, np.ndarray]): Dictionary of stacked, non-flattened agent_info arrays.
- env_infos (dict[str, np.ndarray]): Dictionary of stacked, non-flattened env_info arrays.
- step_types (numpy.ndarray): A numpy array of StepType with shape (T,) containing the time step types for all transitions in this batch.

-

property

next_observations(self)¶ Get the observations seen after actions are performed.

Usually, in an EpisodeBatch, next_observations don’t need to be stored explicitly, since the next observation is already stored in the batch.

- Returns

The “next_observations”.

- Return type

np.ndarray
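One way the next observations could be derived from the stored fields is to shift each episode's observations by one step and append that episode's entry from last_observations. The sketch below assumes this approach and uses plain lists with labelled strings instead of numpy arrays; it is an illustration, not the library's implementation.

```python
def next_observations(observations, last_observations, lengths):
    """Shift each episode's observations by one step and append its last observation."""
    result = []
    start = 0
    for length, last in zip(lengths, last_observations):
        episode = observations[start:start + length]
        result.extend(episode[1:] + [last])
        start += length
    return result


# Two episodes of lengths 3 and 2; observations are labelled for readability.
observations = ["a0", "a1", "a2", "b0", "b1"]
last_observations = ["a3", "b2"]
shifted = next_observations(observations, last_observations, [3, 2])
```

The result has the same flattened length as observations, so shifted[i] lines up with the action taken at step i.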

-

property

padded_observations(self)¶ Padded observations.

- Returns

Padded observations with shape of \((N, \text{max\_episode\_length}, O^*)\).

- Return type

np.ndarray

-

property

padded_actions(self)¶ Padded actions.

- Returns

Padded actions with shape of \((N, \text{max\_episode\_length}, A^*)\).

- Return type

np.ndarray

-

property

observations_list(self)¶ Split observations into a list.

- Returns

The split list.

- Return type

list[np.ndarray]

-

property

actions_list(self)¶ Split actions into a list.

- Returns

The split list.

- Return type

list[np.ndarray]

-

property

padded_rewards(self)¶ Padded rewards.

- Returns

Padded rewards with shape of \((N, \text{max\_episode\_length})\).

- Return type

np.ndarray

-

property

valids(self)¶ An array indicating valid steps in a padded tensor.

- Returns

An array of shape \((N, \text{max\_episode\_length})\).

- Return type

np.ndarray

-

property

padded_agent_infos(self)¶ Padded agent infos.

-

pad_to_last(self, input_array)¶ Pad tensors with zeros.

- Parameters

input_array (np.ndarray) – Tensors to be padded.

- Returns

Padded tensor with shape of \((N, \text{max\_episode\_length})\) or \((N, \text{max\_episode\_length}, S^*)\).

- Return type

numpy.ndarray
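The padded properties and valids describe the same layout: one row per episode, filled with zeros after the episode ends. A minimal sketch of that layout follows, assuming every episode length is at most `max_len`; the helper names `pad_to_length` and `valid_mask` are hypothetical, and plain lists stand in for numpy arrays.

```python
def pad_to_length(flat, lengths, max_len, fill=0.0):
    """Return one row per episode, right-padded with `fill` to `max_len` entries."""
    rows, start = [], 0
    for length in lengths:
        row = list(flat[start:start + length])
        rows.append(row + [fill] * (max_len - length))
        start += length
    return rows


def valid_mask(lengths, max_len):
    """Return 1 for real steps and 0 for padding, matching the padded layout."""
    return [[1] * length + [0] * (max_len - length) for length in lengths]


flat_rewards = [1.0, 0.5, 0.0, 0.0, 2.0]
padded = pad_to_length(flat_rewards, lengths=[2, 3], max_len=4)
mask = valid_mask([2, 3], max_len=4)
```

A mask of this kind lets downstream code ignore padded entries, for example when a padded reward of zero must not be confused with a real reward of zero.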

-

count()¶ Return number of occurrences of value.

-

index()¶ Return first index of value.

Raises ValueError if the value is not present.

-

-

class

InOutSpec(input_space, output_space)¶ Describes the input and output spaces of a primitive or module.

- Parameters

input_space (akro.Space) – Input space of a module.

output_space (akro.Space) – Output space of a module.

-

property

input_space(self)¶ Get input space of the module.

- Returns

Input space of the module.

- Return type

akro.Space

-

property

output_space(self)¶ Get output space of the module.

- Returns

Output space of the module.

- Return type

akro.Space

-

class

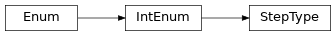

StepType¶ Bases:

enum.IntEnum

Defines the status of a TimeStep within a sequence.

Note that the last TimeStep in a sequence can either be StepType.TERMINAL or StepType.TIMEOUT.

Suppose max_episode_length = 5:

- A successful sequence terminated at step 4 will look like:

FIRST, MID, MID, TERMINAL

- A successful sequence terminated at step 5 will look like:

FIRST, MID, MID, MID, TERMINAL

- An unsuccessful sequence truncated by time limit will look like:

FIRST, MID, MID, MID, TIMEOUT

-

class

denominator¶ the denominator of a rational number in lowest terms

-

class

imag¶ the imaginary part of a complex number

-

class

numerator¶ the numerator of a rational number in lowest terms

-

class

real¶ the real part of a complex number

-

FIRST= 0¶

-

MID= 1¶

-

TERMINAL= 2¶

-

TIMEOUT= 3¶

-

classmethod

get_step_type(cls, step_cnt, max_episode_length, done)¶ Determines the step type based on step cnt and done signal.

- Parameters

- Returns

the step type.

- Return type

- Raises

ValueError – If step_cnt is < 1. In this case an environment’s reset() has likely not been called yet and step_cnt is None.
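The example sequences in the StepType description can be reproduced with a small classifier. The sketch below defines a local stand-in enum with the documented member values and a hypothetical `classify_step` function; the order in which the conditions are checked is an assumption chosen to match those example sequences, not a statement about the library's code.

```python
import enum


class StepType(enum.IntEnum):
    """Local stand-in mirroring the documented member values."""
    FIRST = 0
    MID = 1
    TERMINAL = 2
    TIMEOUT = 3


def classify_step(step_cnt, max_episode_length, done):
    """Hypothetical helper; the precedence of the checks is an assumption."""
    if step_cnt is None or step_cnt < 1:
        raise ValueError("step_cnt must be >= 1; was reset() called?")
    if done:
        return StepType.TERMINAL
    if max_episode_length is not None and step_cnt >= max_episode_length:
        return StepType.TIMEOUT
    if step_cnt == 1:
        return StepType.FIRST
    return StepType.MID


# Episode that terminates at step 4 with max_episode_length = 5.
success = [classify_step(i, 5, done=(i == 4)) for i in range(1, 5)]
# Episode that never terminates and is cut off at step 5.
truncated = [classify_step(i, 5, done=False) for i in range(1, 6)]
```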

-

bit_length()¶ Number of bits necessary to represent self in binary.

    >>> bin(37)
    '0b100101'
    >>> (37).bit_length()
    6

-

conjugate()¶ Returns self, the complex conjugate of any int.

-

to_bytes()¶ Return an array of bytes representing an integer.

- length

Length of bytes object to use. An OverflowError is raised if the integer is not representable with the given number of bytes.

- byteorder

The byte order used to represent the integer. If byteorder is ‘big’, the most significant byte is at the beginning of the byte array. If byteorder is ‘little’, the most significant byte is at the end of the byte array. To request the native byte order of the host system, use ‘sys.byteorder’ as the byte order value.

- signed

Determines whether two’s complement is used to represent the integer. If signed is False and a negative integer is given, an OverflowError is raised.

-

name(self)¶ The name of the Enum member.

-

value(self)¶ The value of the Enum member.

-

class

TimeStep¶ Bases:

collections.namedtuple()

A tuple representing a single TimeStep.

A TimeStep represents a single sample when an agent interacts with an environment. It describes a SARS (State–action–reward–state) tuple that characterizes the evolution of an MDP.

-

episode_info¶ A dict of numpy arrays of shape \((S^*,)\) containing episode-level information of each episode. For example, in goal-conditioned reinforcement learning this could contain the goal state for each episode.

-

observation¶ A numpy array of shape \((O^*)\) containing the observation for this time step in the environment. These must conform to EnvStep.observation_space. The observation before applying the action. None if step_type is StepType.FIRST, i.e. at the start of a sequence.

- Type

numpy.ndarray

-

action¶ A numpy array of shape \((A^*)\) containing the action for this time step. These must conform to EnvStep.action_space. None if step_type is StepType.FIRST, i.e. at the start of a sequence.

- Type

numpy.ndarray

-

reward¶ A float representing the reward for taking the action given the observation, at this time step. None if step_type is StepType.FIRST, i.e. at the start of a sequence.

- Type

-

next_observation¶ A numpy array of shape \((O^*)\) containing the observation for this time step in the environment. These must conform to EnvStep.observation_space. The observation after applying the action.

- Type

numpy.ndarray

-

agent_info¶ A dict of arbitrary agent state information. For example, this may contain the hidden states from an RNN policy.

- Type

-

step_type¶ A StepType enum value. Can be one of StepType.FIRST, StepType.MID, StepType.TERMINAL, or StepType.TIMEOUT.

- Type

-

property

first(self)¶ bool: Whether this step is the first of its episode.

-

property

mid(self)¶ bool: Whether this step is in the middle of its episode.

-

property

terminal(self)¶ bool: Whether this step records a termination condition.

-

property

timeout(self)¶ bool: Whether this step records a timeout condition.

-

property

last(self)¶ bool: Whether this step is the last of its episode.

-

classmethod

from_env_step(cls, env_step, last_observation, agent_info, episode_info)¶ Create a TimeStep from an EnvStep.

- Parameters

env_step (EnvStep) – the env step returned by the environment.

last_observation (numpy.ndarray) – A numpy array of shape \((O^*)\) containing the observation for this time step in the environment. These must conform to EnvStep.observation_space. The observation before applying the action.

agent_info (dict) – A dict of arbitrary agent state information.

- Returns

The TimeStep with all information of EnvStep plus the agent info.

- Return type

-

count()¶ Return number of occurrences of value.

-

index()¶ Return first index of value.

Raises ValueError if the value is not present.


-

class

TimeStepBatch¶ Bases:

collections.namedtuple()

A tuple representing a batch of TimeSteps.

Data type for off-policy algorithms, imitation learning and batch-RL.

-

episode_infos¶ A dict of numpy arrays containing the episode-level information of each episode. Each value of this dict should be a numpy array of shape \((N, S^*)\). For example, in goal-conditioned reinforcement learning this could contain the goal state for each episode.

-

observations¶ Non-flattened array of observations. Typically has shape (batch_size, S^*) (the unflattened state space of the current environment).

- Type

numpy.ndarray

-

actions¶ Non-flattened array of actions. Should have shape (batch_size, S^*) (the unflattened action space of the current environment).

- Type

numpy.ndarray

-

rewards¶ Array of rewards of shape (batch_size, 1).

- Type

numpy.ndarray

-

next_observation¶ Non-flattened array of next observations. Has shape (batch_size, S^*). next_observations[i] was observed by the agent after taking actions[i].

- Type

numpy.ndarray

-

agent_infos¶ A dict of arbitrary agent state information. For example, this may contain the hidden states from an RNN policy.

- Type

-

step_types¶ A numpy array of StepType with shape (batch_size,) containing the time step types for all transitions in this batch.

- Type

numpy.ndarray

- Raises

ValueError – If any of the above attributes do not conform to their prescribed types and shapes.

-

classmethod

concatenate(cls, *batches)¶ Concatenate two or more TimeStepBatches.

- Parameters

batches (list[TimeStepBatch]) – Batches to concatenate.

- Returns

The concatenation of the batches.

- Return type

- Raises

ValueError – If no TimeStepBatches are provided.
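Concatenation joins each field of the batches end to end and rejects an empty argument list. A minimal sketch of that behavior follows, with dicts of lists standing in for TimeStepBatch instances; `concatenate_batches` is a hypothetical function, not the classmethod itself.

```python
def concatenate_batches(*batches):
    """Join dict-of-list batches field by field (hypothetical stand-in)."""
    if not batches:
        raise ValueError("Please provide at least one batch to concatenate")
    keys = batches[0].keys()
    return {key: [item for batch in batches for item in batch[key]] for key in keys}


batch_a = {"observations": [[0.0], [0.1]], "actions": [0, 1], "rewards": [1.0, 0.0]}
batch_b = {"observations": [[0.2]], "actions": [1], "rewards": [0.5]}
merged = concatenate_batches(batch_a, batch_b)
```

Every field of the merged batch keeps the same leading batch dimension, here 2 + 1 = 3 time steps.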

-

split(self)¶ Split a TimeStepBatch into a list of TimeStepBatches.

The opposite of concatenate.

- Returns

A list of TimeStepBatches, with one TimeStep per TimeStepBatch.

- Return type

-

to_time_step_list(self)¶ Convert the batch into a list of dictionaries.

Breaks the TimeStepBatch into a list of single time step sample dictionaries. len(rewards) (or the number of discrete time steps) dictionaries are returned.

- Returns

Keys:

- episode_infos (dict[str, np.ndarray]): A dict of numpy arrays containing the episode-level information of each episode. Each value of this dict should be a numpy array of shape \((S^*,)\). For example, in goal-conditioned reinforcement learning this could contain the goal state for each episode.
- observations (numpy.ndarray): Non-flattened array of observations. Typically has shape (batch_size, S^*) (the unflattened state space of the current environment).
- actions (numpy.ndarray): Non-flattened array of actions. Should have shape (batch_size, S^*) (the unflattened action space of the current environment).
- rewards (numpy.ndarray): Array of rewards of shape (batch_size,) (1D array of length batch_size).
- next_observation (numpy.ndarray): Non-flattened array of next observations. Has shape (batch_size, S^*). next_observations[i] was observed by the agent after taking actions[i].
- env_infos (dict): A dict of arbitrary environment state information.
- agent_infos (dict): A dict of arbitrary agent state information. For example, this may contain the hidden states from an RNN policy.
- step_types (numpy.ndarray): A numpy array of StepType with shape (batch_size,) containing the time step types for all transitions in this batch.

- Return type

-

property

terminals(self)¶ Get an array of booleans indicating terminal information.

-

classmethod

from_time_step_list(cls, env_spec, ts_samples)¶ Create a TimeStepBatch from a list of time step dictionaries.

- Parameters

env_spec (EnvSpec) – Specification for the environment from which this data was sampled.

ts_samples (list[dict[str, np.ndarray or dict[str, np.ndarray]]]) – Keys:

- episode_infos (dict[str, np.ndarray]): A dict of numpy arrays containing the episode-level information of each episode. Each value of this dict should be a numpy array of shape \((N, S^*)\). For example, in goal-conditioned reinforcement learning this could contain the goal state for each episode.
- observations (numpy.ndarray): Non-flattened array of observations. Typically has shape (batch_size, S^*) (the unflattened state space of the current environment).
- actions (numpy.ndarray): Non-flattened array of actions. Should have shape (batch_size, S^*) (the unflattened action space of the current environment).
- rewards (numpy.ndarray): Array of rewards of shape (batch_size,) (1D array of length batch_size).
- next_observation (numpy.ndarray): Non-flattened array of next observations. Has shape (batch_size, S^*). next_observations[i] was observed by the agent after taking actions[i].
- env_infos (dict): A dict of arbitrary environment state information.
- agent_infos (dict): A dict of arbitrary agent state information. For example, this may contain the hidden states from an RNN policy.
- step_types (numpy.ndarray): A numpy array of StepType with shape (batch_size,) containing the time step types for all transitions in this batch.

- Returns

The concatenation of samples.

- Return type

- Raises

ValueError – If no dicts are provided.

-

classmethod

from_episode_batch(cls, batch)¶ Construct a TimeStepBatch from an EpisodeBatch.

- Parameters

batch (EpisodeBatch) – Episode batch to convert.

- Returns

The converted batch.

- Return type

-

count()¶ Return number of occurrences of value.

-

index()¶ Return first index of value.

Raises ValueError if the value is not present.

-

-

log_multitask_performance(itr, batch, discount, name_map=None)¶ Log performance of episodes from multiple tasks.

- Parameters

itr (int) – Iteration number to be logged.

batch (EpisodeBatch) – Batch of episodes. The episodes should have either the “task_name” or “task_id” env_infos. If the “task_name” is not present, then name_map is required, and should map from task id’s to task names.

discount (float) – Discount used in computing returns.

name_map (dict[int, str] or None) – Mapping from task id’s to task names. Optional if the “task_name” environment info is present. Note that if provided, all tasks listed in this map will be logged, even if there are no episodes present for them.

- Returns

Undiscounted returns averaged across all tasks. Has shape \((N \bullet [T])\).

- Return type

numpy.ndarray

-

log_performance(itr, batch, discount, prefix='Evaluation')¶ Evaluate the performance of an algorithm on a batch of episodes.

- Parameters

itr (int) – Iteration number.

batch (EpisodeBatch) – The episodes to evaluate with.

discount (float) – Discount value, from algorithm’s property.

prefix (str) – Prefix to add to all logged keys.

- Returns

Undiscounted returns.

- Return type

numpy.ndarray
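The difference between the undiscounted returns that are reported back and the discounted returns that the discount argument refers to can be shown with a few lines of arithmetic. This is a sketch under the assumption that returns are computed per episode from the flattened rewards and lengths; both helper names are hypothetical and plain lists replace numpy arrays.

```python
def discounted_return(rewards, discount):
    """Sum of discount**t * reward_t over one episode."""
    total = 0.0
    for reward in reversed(rewards):
        total = reward + discount * total
    return total


def undiscounted_returns(flat_rewards, lengths):
    """Plain reward sum for each episode in a flattened batch."""
    returns, start = [], 0
    for length in lengths:
        returns.append(sum(flat_rewards[start:start + length]))
        start += length
    return returns


flat_rewards = [1.0, 1.0, 1.0, 2.0, 4.0]
per_episode = undiscounted_returns(flat_rewards, lengths=[3, 2])
first_discounted = discounted_return(flat_rewards[:3], discount=0.5)
```

For the first episode the discounted return is 1 + 0.5 + 0.25 = 1.75, while its undiscounted return is 3.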

-

make_optimizer(optimizer_type, module=None, **kwargs)¶ Create an optimizer for PyTorch and TensorFlow algorithms.

- Parameters

optimizer_type (Union[type, tuple[type, dict]]) – Type of optimizer. This can be an optimizer type such as ‘torch.optim.Adam’ or a tuple of type and dictionary, where dictionary contains arguments to initialize the optimizer e.g. (torch.optim.Adam, {‘lr’ : 1e-3})

module (optional) – The torch.nn.Module whose parameters need to be optimized. Must be specified if the optimizer type is a torch.optim optimizer.

kwargs (dict) – Other keyword arguments to initialize optimizer. This is not used when optimizer_type is tuple.

- Returns

Constructed optimizer.

- Return type

torch.optim.Optimizer

- Raises

ValueError – If optimizer_type is a tuple and a non-default argument is passed in kwargs.
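The two accepted forms of optimizer_type, a bare type or a (type, dict) tuple, can be illustrated with a small dispatcher. The sketch below uses a stand-in `ToyOptimizer` class so that it runs without PyTorch or TensorFlow; `build_optimizer` and its `params` argument are hypothetical names, and the real function's handling of module is not modelled here.

```python
class ToyOptimizer:
    """Stand-in optimizer so the sketch runs without PyTorch or TensorFlow."""

    def __init__(self, params=None, lr=0.01):
        self.params = params
        self.lr = lr


def build_optimizer(optimizer_type, params=None, **kwargs):
    """Hypothetical helper: accept a type, or a (type, args-dict) tuple."""
    if isinstance(optimizer_type, tuple):
        opt_type, opt_args = optimizer_type
        if kwargs:
            raise ValueError(
                "Pass optimizer arguments in the tuple or as kwargs, not both")
        return opt_type(params, **opt_args)
    return optimizer_type(params, **kwargs)


from_tuple = build_optimizer((ToyOptimizer, {"lr": 1e-3}), params=["w"])
from_kwargs = build_optimizer(ToyOptimizer, params=["w"], lr=0.1)
```

Rejecting keyword arguments alongside a tuple keeps a single source of truth for the optimizer's settings.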

-

obtain_evaluation_episodes(policy, env, max_episode_length=1000, num_eps=100, deterministic=True)¶ Sample the policy for num_eps episodes and return average values.

- Parameters

policy (Policy) – Policy to use as the actor when gathering samples.

env (Environment) – The environment used to obtain episodes.

max_episode_length (int) – Maximum episode length. The episode will be truncated when the length of the episode reaches max_episode_length.

num_eps (int) – Number of episodes.

deterministic (bool) – Whether a deterministic approach is used in the rollout.

- Returns

- Evaluation episodes, representing the best current

performance of the algorithm.

- Return type

-

rollout(env, agent, *, max_episode_length=np.inf, animated=False, pause_per_frame=None, deterministic=False)¶ Sample a single episode of the agent in the environment.

- Parameters

agent (Policy) – Policy used to select actions.

env (Environment) – Environment to perform actions in.

max_episode_length (int) – If the episode reaches this many timesteps, it is truncated.

animated (bool) – If true, render the environment after each step.

pause_per_frame (float) – Time to sleep between steps. Only relevant if animated == true.

deterministic (bool) – If true, use the mean action returned by the stochastic policy instead of sampling from the returned action distribution.

- Returns

Dictionary, with keys:

- observations(np.array): Flattened array of observations. There should be one more of these than actions. Note that observations[i] (for i < len(observations) - 1) was used by the agent to choose actions[i]. Should have shape \((T + 1, S^*)\), i.e. the unflattened observation space of the current environment.
- actions(np.array): Non-flattened array of actions. Should have shape \((T, S^*)\), i.e. the unflattened action space of the current environment.
- rewards(np.array): Array of rewards of shape \((T,)\), i.e. a 1D array of length timesteps.
- agent_infos(Dict[str, np.array]): Dictionary of stacked, non-flattened agent_info arrays.
- env_infos(Dict[str, np.array]): Dictionary of stacked, non-flattened env_info arrays.
- dones(np.array): Array of termination signals.

- Return type
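The shape relationship in the keys above (one more observation than actions, truncation at max_episode_length) can be seen in a toy sampling loop. The environment and policy below are made up for illustration and use a simplified `reset`/`step` interface that returns a tuple; this is an assumption of the sketch and not the garage Environment API.

```python
class CountdownEnv:
    """Toy environment: the state counts down and the episode ends at zero."""

    def reset(self):
        self.state = 3
        return self.state

    def step(self, action):
        self.state -= action
        return self.state, 1.0, self.state <= 0  # observation, reward, done


def toy_rollout(env, policy, max_episode_length=100):
    """Collect one episode; the returned keys mirror part of the list above."""
    observations, actions, rewards, dones = [env.reset()], [], [], []
    while len(actions) < max_episode_length:
        action = policy(observations[-1])
        obs, reward, done = env.step(action)
        observations.append(obs)
        actions.append(action)
        rewards.append(reward)
        dones.append(done)
        if done:
            break
    return {"observations": observations, "actions": actions,
            "rewards": rewards, "dones": dones}


episode = toy_rollout(CountdownEnv(), policy=lambda obs: 1)
```

Passing a smaller max_episode_length cuts the episode short, in which case the final entry of dones stays False.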

-

wrap_experiment(function=None, *, log_dir=None, prefix='experiment', name=None, snapshot_mode='last', snapshot_gap=1, archive_launch_repo=True, name_parameters=None, use_existing_dir=False, x_axis='TotalEnvSteps')¶ Decorate a function to turn it into an ExperimentTemplate.

When invoked, the wrapped function will receive an ExperimentContext, which will contain the log directory into which the experiment should log information.

This decorator can be invoked in two different ways.

Without arguments, like this:

    @wrap_experiment
    def my_experiment(ctxt, seed, lr=0.5):
        ...

Or with arguments:

    @wrap_experiment(snapshot_mode='all')
    def my_experiment(ctxt, seed, lr=0.5):
        ...

All arguments must be keyword arguments.

- Parameters

function (callable or None) – The experiment function to wrap.

log_dir (str or None) – The full log directory to log to. Will be computed from name if omitted.

name (str or None) – The name of this experiment template. Will be filled from the wrapped function’s name if omitted.

prefix (str) – Directory under data/local in which to place the experiment directory.

snapshot_mode (str) – Policy for which snapshots to keep (or make at all). Can be either “all” (all iterations will be saved), “last” (only the last iteration will be saved), “gap” (every snapshot_gap iterations are saved), or “none” (do not save snapshots).

snapshot_gap (int) – Gap between snapshot iterations. Waits this number of iterations before taking another snapshot.

archive_launch_repo (bool) – Whether to save an archive of the repository containing the launcher script. This is a potentially expensive operation which is useful for ensuring reproducibility.

name_parameters (str or None) – Parameters to insert into the experiment name. Should be either None (the default), ‘all’ (all parameters will be used), or ‘passed’ (only passed parameters will be used). The used parameters will be inserted in the order they appear in the function definition.

use_existing_dir (bool) – If true, (re)use the directory for this experiment, even if it already contains data.

x_axis (str) – Key to use for x axis of plots.

- Returns

The wrapped function.

- Return type

callable
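A decorator that works both bare and with keyword arguments typically takes the function as an optional first parameter and makes everything else keyword-only, as the signature above does. The following is a sketch of that general pattern under simplified assumptions: `wrap_sketch` is hypothetical, the context is a plain dict, and the directory layout it builds is invented for illustration.

```python
import functools


def wrap_sketch(function=None, *, prefix="experiment", name=None):
    """Hypothetical decorator usable as @wrap_sketch or @wrap_sketch(prefix=...)."""
    def decorate(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # Stand-in for the context object; only a log directory string here.
            ctxt = {"log_dir": f"data/local/{prefix}/{name or func.__name__}"}
            return func(ctxt, *args, **kwargs)
        return wrapper

    if function is None:
        # Called with keyword arguments: return the real decorator.
        return decorate
    # Called bare: the function was passed in directly.
    return decorate(function)


@wrap_sketch
def bare(ctxt, seed):
    return ctxt["log_dir"], seed


@wrap_sketch(prefix="sweeps")
def configured(ctxt, seed):
    return ctxt["log_dir"], seed
```

Making the options keyword-only is what lets the decorator tell the two call styles apart: a positional argument can only be the function being wrapped.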

-

TFTrainer¶

-

class

Trainer(snapshot_config)¶ Base class of trainer.

Use trainer.setup(algo, env) to set up the algorithm and environment for the trainer, and trainer.train() to start training.

- Parameters

snapshot_config (garage.experiment.SnapshotConfig) – The snapshot configuration used by Trainer to create the snapshotter. If None, it will create one with default settings.

Note

For the use of any TensorFlow environments, policies and algorithms, please use TFTrainer().

Examples

    # to train
    trainer = Trainer()
    env = Env(...)
    policy = Policy(...)
    algo = Algo(env=env, policy=policy, ...)
    trainer.setup(algo, env)
    trainer.train(n_epochs=100, batch_size=4000)

    # to resume immediately.
    trainer = Trainer()
    trainer.restore(resume_from_dir)
    trainer.resume()

    # to resume with modified training arguments.
    trainer = Trainer()
    trainer.restore(resume_from_dir)
    trainer.resume(n_epochs=20)

-

make_sampler(self, sampler_cls, *, seed=None, n_workers=psutil.cpu_count(logical=False), max_episode_length=None, worker_class=None, sampler_args=None, worker_args=None)¶ Construct a Sampler from a Sampler class.

- Parameters

sampler_cls (type) – The type of sampler to construct.

seed (int) – Seed to use in sampler workers.

max_episode_length (int) – Maximum episode length to be sampled by the sampler. Episodes longer than this will be truncated.

n_workers (int) – The number of workers the sampler should use.

worker_class (type) – Type of worker the Sampler should use.

sampler_args (dict or None) – Additional arguments that should be passed to the sampler.

worker_args (dict or None) – Additional arguments that should be passed to the worker.

- Raises

ValueError – If max_episode_length isn’t passed and the algorithm doesn’t contain a max_episode_length field, or if the algorithm doesn’t have a policy field.

- Returns

An instance of the sampler class.

- Return type

sampler_cls

-

setup(self, algo, env, sampler_cls=None, sampler_args=None, n_workers=psutil.cpu_count(logical=False), worker_class=None, worker_args=None)¶ Set up trainer for algorithm and environment.

This method saves algo and env within trainer and creates a sampler.

Note

After setup() is called all variables in session should have been initialized. setup() respects existing values in session so policy weights can be loaded before setup().

- Parameters

algo (RLAlgorithm) – An algorithm instance.

env (Environment) – An environment instance.

sampler_cls (type) – A class which implements Sampler.

sampler_args (dict) – Arguments to be passed to sampler constructor.

n_workers (int) – The number of workers the sampler should use.

worker_class (type) – Type of worker the sampler should use.

worker_args (dict or None) – Additional arguments that should be passed to the worker.

- Raises

ValueError – If sampler_cls is passed and the algorithm doesn’t contain a max_episode_length field.

-

obtain_episodes(self, itr, batch_size=None, agent_update=None, env_update=None)¶ Obtain one batch of episodes.

- Parameters

itr (int) – Index of iteration (epoch).

batch_size (int) – Number of steps in batch. This is a hint that the sampler may or may not respect.

agent_update (object) – Value which will be passed into the agent_update_fn before sampling episodes. If a list is passed in, it must have length exactly factory.n_workers, and will be spread across the workers.

env_update (object) – Value which will be passed into the env_update_fn before sampling episodes. If a list is passed in, it must have length exactly factory.n_workers, and will be spread across the workers.

- Raises

ValueError – If the trainer was initialized without a sampler, or batch_size wasn’t provided here or to train.

- Returns

Batch of episodes.

- Return type

-

obtain_samples(self, itr, batch_size=None, agent_update=None, env_update=None)¶ Obtain one batch of samples.

- Parameters

itr (int) – Index of iteration (epoch).

batch_size (int) – Number of steps in batch. This is a hint that the sampler may or may not respect.

agent_update (object) – Value which will be passed into the agent_update_fn before sampling episodes. If a list is passed in, it must have length exactly factory.n_workers, and will be spread across the workers.

env_update (object) – Value which will be passed into the env_update_fn before sampling episodes. If a list is passed in, it must have length exactly factory.n_workers, and will be spread across the workers.

- Raises

ValueError – Raised if the trainer was initialized without a sampler, or batch_size wasn’t provided here or to train.

- Returns

One batch of samples.

- Return type

-

save(self, epoch)¶ Save snapshot of current batch.

- Parameters

epoch (int) – Epoch.

- Raises

NotSetupError – if save() is called before the trainer is set up.

-

restore(self, from_dir, from_epoch='last')¶ Restore experiment from snapshot.

-

log_diagnostics(self, pause_for_plot=False)¶ Log diagnostics.

- Parameters

pause_for_plot (bool) – Pause for plot.

-

train(self, n_epochs, batch_size=None, plot=False, store_episodes=False, pause_for_plot=False)¶ Start training.

- Parameters

- Raises

NotSetupError – If train() is called before setup().

- Returns

The average return in last epoch cycle.

- Return type

-

step_epochs(self)¶ Step through each epoch.

This function returns a magic generator. When iterated through, this generator automatically performs services such as snapshotting and log management. It is used inside train() in each algorithm.

The generator initializes two variables: self.step_itr and self.step_episode. To use the generator, these two have to be updated manually in each epoch, as the example shows below.

- Yields

int – The next training epoch.

Examples

    for epoch in trainer.step_epochs():
        trainer.step_episode = trainer.obtain_samples(...)
        self.train_once(...)
        trainer.step_itr += 1
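The way a generator can run bookkeeping around each loop body may be easier to see in isolation. The sketch below is a toy stand-in, not the Trainer class: `ToyTrainer` and its `saved` list are hypothetical, and recording a tuple replaces real snapshotting and logging.

```python
class ToyTrainer:
    """Minimal stand-in showing bookkeeping wrapped around each yielded epoch."""

    def __init__(self, n_epochs):
        self.n_epochs = n_epochs
        self.step_itr = 0
        self.step_episode = None
        self.saved = []  # records (epoch, step_itr) in place of real snapshots

    def step_epochs(self):
        for epoch in range(self.n_epochs):
            yield epoch  # the caller's loop body runs here
            # After the body finishes, do per-epoch services.
            self.saved.append((epoch, self.step_itr))
            self.step_episode = None


trainer = ToyTrainer(n_epochs=3)
for epoch in trainer.step_epochs():
    trainer.step_episode = [f"episode-{epoch}"]  # stands in for obtain_samples(...)
    trainer.step_itr += 1
```

Code placed after the yield runs only when the caller asks for the next epoch, which is why the loop body must update step_itr and step_episode before the iteration ends.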

-

resume(self, n_epochs=None, batch_size=None, plot=None, store_episodes=None, pause_for_plot=None)¶ Resume from restored experiment.

This method provides the same interface as train().

If not specified, an argument will default to the saved arguments from the last call to train().

- Parameters

- Raises

NotSetupError – If resume() is called before restore().

- Returns

The average return in last epoch cycle.

- Return type

-

get_env_copy(self)¶ Get a copy of the environment.

- Returns

An environment instance.

- Return type