garage.torch.policies.gaussian_mlp_policy

GaussianMLPPolicy.

-

class

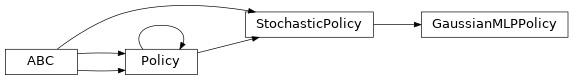

GaussianMLPPolicy(env_spec, hidden_sizes=(32, 32), hidden_nonlinearity=torch.tanh, hidden_w_init=nn.init.xavier_uniform_, hidden_b_init=nn.init.zeros_, output_nonlinearity=None, output_w_init=nn.init.xavier_uniform_, output_b_init=nn.init.zeros_, learn_std=True, init_std=1.0, min_std=1e-06, max_std=None, std_parameterization='exp', layer_normalization=False, name='GaussianMLPPolicy')¶ Bases:

garage.torch.policies.stochastic_policy.StochasticPolicy

MLP whose outputs are fed into a Normal distribution.

A policy that uses an MLP to parameterize a Gaussian distribution over actions.

- Parameters

env_spec (EnvSpec) – Environment specification.

hidden_sizes (list[int]) – Output dimension of dense layer(s) for the MLP for mean. For example, (32, 32) means the MLP consists of two hidden layers, each with 32 hidden units.

hidden_nonlinearity (callable) – Activation function for intermediate dense layer(s). It should return a torch.Tensor. Set it to None to maintain a linear activation.

hidden_w_init (callable) – Initializer function for the weight of intermediate dense layer(s). The function should return a torch.Tensor.

hidden_b_init (callable) – Initializer function for the bias of intermediate dense layer(s). The function should return a torch.Tensor.

output_nonlinearity (callable) – Activation function for output dense layer. It should return a torch.Tensor. Set it to None to maintain a linear activation.

output_w_init (callable) – Initializer function for the weight of output dense layer(s). The function should return a torch.Tensor.

output_b_init (callable) – Initializer function for the bias of output dense layer(s). The function should return a torch.Tensor.

learn_std (bool) – Whether the std is trainable.

init_std (float) – Initial value for std (plain value, not log or exponentiated).

min_std (float) – Minimum value for std.

max_std (float) – Maximum value for std.

std_parameterization (str) – How the std should be parameterized. There are two options:

- exp: the logarithm of the std is stored, and an exponential transformation is applied to it.

- softplus: the std is computed as log(1+exp(x)).

layer_normalization (bool) – Whether to use layer normalization.

name (str) – Name of policy.
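The two std_parameterization options can be illustrated with a small dependency-free sketch. It is not garage's implementation: the helper names init_param and std_from_param are hypothetical, and clamping the final std to [min_std, max_std] is an assumption about how the bounds are applied.

```python
import math


def init_param(init_std, parameterization="exp"):
    """Return the raw parameter x whose transformed value equals init_std."""
    if parameterization == "exp":
        # std = exp(x)  =>  x = log(std)
        return math.log(init_std)
    if parameterization == "softplus":
        # std = log(1 + exp(x))  =>  x = log(exp(std) - 1)
        return math.log(math.exp(init_std) - 1.0)
    raise ValueError(f"unknown parameterization: {parameterization}")


def std_from_param(x, parameterization="exp", min_std=1e-6, max_std=None):
    """Map a raw parameter x to a std, then clamp it (assumed behavior)."""
    if parameterization == "exp":
        std = math.exp(x)
    elif parameterization == "softplus":
        std = math.log1p(math.exp(x))
    else:
        raise ValueError(f"unknown parameterization: {parameterization}")
    std = max(std, min_std)
    if max_std is not None:
        std = min(std, max_std)
    return std


exp_std = std_from_param(init_param(1.0, "exp"), "exp")
softplus_std = std_from_param(init_param(1.0, "softplus"), "softplus")
```

Both parameterizations keep the std positive for any real-valued x, which is why the raw parameter can be optimized without constraints.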

-

forward(self, observations)

Compute the action distributions from the observations.

- Parameters

observations (torch.Tensor) – Batch of observations on default torch device.

- Returns

- Batch distribution of actions.

- dict[str, torch.Tensor]: Additional agent_info, as torch Tensors.

- Return type

torch.distributions.Distribution
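As a rough illustration of what a forward pass of this kind involves, the sketch below builds a tiny stand-in module in plain PyTorch. TinyGaussianPolicy is hypothetical and is not garage's class; the info keys "mean" and "log_std", and the use of a state-independent log std, are assumptions made for the example.

```python
import math

import torch
from torch import nn
from torch.distributions import Independent, Normal


class TinyGaussianPolicy(nn.Module):
    """Hypothetical stand-in, not garage's GaussianMLPPolicy."""

    def __init__(self, obs_dim, action_dim, hidden_sizes=(32, 32), init_std=1.0):
        super().__init__()
        layers = []
        in_dim = obs_dim
        for size in hidden_sizes:
            layers += [nn.Linear(in_dim, size), nn.Tanh()]
            in_dim = size
        layers.append(nn.Linear(in_dim, action_dim))
        self.mean_net = nn.Sequential(*layers)
        # 'exp' parameterization: store log(std) as a learnable parameter.
        self.log_std = nn.Parameter(
            torch.full((action_dim,), math.log(init_std)))

    def forward(self, observations):
        mean = self.mean_net(observations)
        log_std = self.log_std.expand_as(mean)
        # Treat the action dimensions as one event with a diagonal Gaussian.
        dist = Independent(Normal(mean, log_std.exp()), 1)
        return dist, dict(mean=mean, log_std=log_std)


policy = TinyGaussianPolicy(obs_dim=4, action_dim=2)
observations = torch.zeros(5, 4)      # batch of 5 observations
dist, info = policy(observations)
actions = dist.sample()               # one action per observation
log_probs = dist.log_prob(actions)    # one log-probability per observation
```

Wrapping Normal in Independent makes log_prob sum over the action dimensions, so each observation in the batch gets a single log-probability.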

-

get_action(self, observation)

Get a single action given an observation.

- Parameters

observation (np.ndarray) – Observation from the environment. Shape is \(env_spec.observation_space\).

- Returns

- np.ndarray: Predicted action. Shape is \(env_spec.action_space\).

- dict:

  - np.ndarray[float]: Mean of the distribution.

  - np.ndarray[float]: Standard deviation of logarithmic values of the distribution.

- Return type

tuple

-

get_actions(self, observations)

Get actions given observations.

- Parameters

observations (np.ndarray) – Observations from the environment. Shape is \(batch_dim \bullet env_spec.observation_space\).

- Returns

- np.ndarray: Predicted actions. Shape is \(batch_dim \bullet env_spec.action_space\).

- dict:

  - np.ndarray[float]: Mean of the distribution.

  - np.ndarray[float]: Standard deviation of logarithmic values of the distribution.

- Return type

tuple

-

get_param_values(self)

Get the parameters to the policy.

This method is included to ensure consistency with TF policies.

- Returns

The parameters (in the form of the state dictionary).

- Return type

dict

-

set_param_values(self, state_dict)

Set the parameters to the policy.

This method is included to ensure consistency with TF policies.

- Parameters

state_dict (dict) – State dictionary.
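Since the parameters are exchanged as a state dictionary, copying them between two modules follows the usual PyTorch pattern. The sketch below uses plain nn.Linear modules and the standard state_dict / load_state_dict calls; that get_param_values and set_param_values behave the same way is an assumption based on the descriptions above.

```python
import copy

import torch
from torch import nn

source = nn.Linear(3, 2)
target = nn.Linear(3, 2)

# Snapshot the source parameters; deepcopy detaches the snapshot from
# later in-place updates to the source module.
params = copy.deepcopy(source.state_dict())

# Load the snapshot into the target module.
target.load_state_dict(params)
```

Taking a deep copy matters when the source keeps training, because state_dict() returns references to the live tensors.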

-

reset(self, do_resets=None)

Reset the policy.

This only has an effect on recurrent policies.

do_resets is an array of booleans indicating which internal states should be reset. The length of do_resets should equal the length of the inputs, i.e. the batch size.

- Parameters

do_resets (numpy.ndarray) – Bool array indicating which states should be reset.

-

property env_spec(self)

Policy environment specification.

- Returns

Environment specification.

- Return type

garage.EnvSpec

-

property observation_space(self)

Observation space.

- Returns

The observation space of the environment.

- Return type

akro.Space

-

property action_space(self)

Action space.

- Returns

The action space of the environment.

- Return type

akro.Space