garage.replay_buffer¶

Replay buffers.

The replay buffer primitives can be used for RL algorithms.

-

class

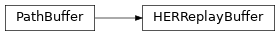

HERReplayBuffer(replay_k, reward_fn, capacity_in_transitions, env_spec)¶ Bases:

garage.replay_buffer.path_buffer.PathBuffer

Replay buffer for HER (Hindsight Experience Replay).

It constructs hindsight examples using the "future" strategy: each substituted goal is taken from a state reached later in the same episode.

Parameters: - replay_k (int) – Number of HER transitions to add for each regular transition. Setting this to 0 means that no HER transitions are added.

- reward_fn (callable) – Function to re-compute the reward with substituted goals.

- capacity_in_transitions (int) – Maximum number of transitions the buffer can hold.

- env_spec (EnvSpec) – Environment specification.

-

n_transitions_stored¶ Return the size of the replay buffer.

Returns: Size of the current replay buffer. Return type: int

-

add_path(self, path)¶ Adds a path to the replay buffer.

For each transition in the given path except the last one, replay_k HER transitions will be added to the buffer in addition to the one in the path. The last transition is added without sampling additional HER goals.

Parameters: path (dict[str, np.ndarray]) – Each key in the dict must map to a np.ndarray of shape \((T, S^*)\).
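The relabeling that add_path describes can be illustrated with a minimal pure-Python sketch. It is not garage's implementation: the per-step dict layout, the helper name her_future_relabel, and the two-argument sparse_reward function are assumptions made for illustration only.

```python
import random


def her_future_relabel(path, replay_k, reward_fn, rng=None):
    """Return hindsight transitions for one path using a "future" strategy.

    `path` is a hypothetical list of per-step dicts with the keys
    'achieved_goal', 'goal' and 'reward'; garage stores paths differently.
    """
    rng = rng or random.Random()
    last = len(path) - 1
    relabeled = []
    for t, step in enumerate(path):
        if t == last:
            # The last transition has no later step to draw a goal from.
            continue
        for _ in range(replay_k):
            # Pick a later step and treat what it achieved as the new goal.
            future_t = rng.randint(t + 1, last)
            new_goal = path[future_t]['achieved_goal']
            new_step = dict(step, goal=new_goal)
            # Re-compute the reward against the substituted goal.
            new_step['reward'] = reward_fn(step['achieved_goal'], new_goal)
            relabeled.append(new_step)
    return relabeled


def sparse_reward(achieved_goal, goal):
    # Hypothetical reward: 0 when the goal is reached, -1 otherwise.
    return 0.0 if achieved_goal == goal else -1.0


path = [{'achieved_goal': i, 'goal': 99, 'reward': -1.0} for i in range(4)]
extra = her_future_relabel(path, replay_k=2, reward_fn=sparse_reward,
                           rng=random.Random(0))
```

With a path of four steps and replay_k=2, the sketch produces two extra transitions for each of the first three steps and none for the last.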

-

add_episode_batch(self, episodes)¶ Add an EpisodeBatch to the buffer.

Parameters: episodes (EpisodeBatch) – Episodes to add.

-

sample_path(self)¶ Sample a single path from the buffer.

Returns: A dict of arrays of shape (path_len, flat_dim). Return type: path

-

sample_transitions(self, batch_size)¶ Sample a batch of transitions from the buffer.

Parameters: batch_size (int) – Number of transitions to sample. Returns: A dict of arrays of shape (batch_size, flat_dim). Return type: dict

-

clear(self)¶ Clear buffer.

-

class PathBuffer(capacity_in_transitions)¶ A replay buffer that stores and can sample whole episodes.

This buffer only stores valid steps, and doesn’t require paths to have a maximum length.

Parameters: capacity_in_transitions (int) – Total memory allocated for the buffer, measured in transitions.
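To make the idea of a capacity counted in transitions concrete, here is a toy sketch of a buffer that stores whole paths of varying length. The class name TinyPathBuffer and the oldest-first eviction policy are assumptions for illustration; garage's PathBuffer may manage its storage differently.

```python
from collections import deque


class TinyPathBuffer:
    """Toy stand-in for a path buffer; not garage's implementation."""

    def __init__(self, capacity_in_transitions):
        self._capacity = capacity_in_transitions
        self._paths = deque()
        self.n_transitions_stored = 0

    def add_path(self, path):
        # Every entry of a path has the same length, so read it off any key.
        path_len = len(next(iter(path.values())))
        if path_len > self._capacity:
            raise ValueError('Path is longer than the buffer capacity.')
        # Assumed policy: drop whole paths, oldest first, until the new one fits.
        while self.n_transitions_stored + path_len > self._capacity:
            old = self._paths.popleft()
            self.n_transitions_stored -= len(next(iter(old.values())))
        self._paths.append(path)
        self.n_transitions_stored += path_len


buf = TinyPathBuffer(capacity_in_transitions=5)
buf.add_path({'reward': [1.0, 1.0, 1.0]})  # 3 transitions stored
buf.add_path({'reward': [2.0, 2.0]})       # 5 transitions stored
buf.add_path({'reward': [3.0, 3.0]})       # the first path is evicted
```

Because paths are dropped whole, the buffer never holds a partial episode, and no maximum path length has to be fixed in advance.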

n_transitions_stored¶ Return the size of the replay buffer.

Returns: Size of the current replay buffer. Return type: int

-

add_episode_batch(self, episodes)¶ Add an EpisodeBatch to the buffer.

Parameters: episodes (EpisodeBatch) – Episodes to add.

-

add_path(self, path)¶ Add a path to the buffer.

Parameters: path (dict) – A dict of arrays of shape (path_len, flat_dim). Raises: ValueError – If a key is missing from path or path has wrong shape.
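The kind of check behind the ValueError can be sketched as follows. This is a hedged illustration, not garage's code: check_path and its expected_keys argument are hypothetical, and nested lists stand in for the (path_len, flat_dim) arrays.

```python
def check_path(path, expected_keys):
    """Validate a path dict and return its length in transitions.

    `expected_keys` is a hypothetical argument; nested lists stand in
    for arrays of shape (path_len, flat_dim).
    """
    missing = set(expected_keys) - set(path)
    if missing:
        raise ValueError('Path is missing keys: {}'.format(sorted(missing)))
    lengths = {key: len(value) for key, value in path.items()}
    # All entries must describe the same number of steps.
    if len(set(lengths.values())) != 1:
        raise ValueError('Entries differ in path_len: {}'.format(lengths))
    return next(iter(lengths.values()))


path = {'observation': [[0.0, 1.0], [1.0, 2.0]], 'action': [[0.5], [0.1]]}
n_steps = check_path(path, ['observation', 'action'])
```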

-

sample_path(self)¶ Sample a single path from the buffer.

Returns: A dict of arrays of shape (path_len, flat_dim). Return type: path

-

sample_transitions(self, batch_size)¶ Sample a batch of transitions from the buffer.

Parameters: batch_size (int) – Number of transitions to sample. Returns: A dict of arrays of shape (batch_size, flat_dim). Return type: dict
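A minimal sketch of what sampling a batch of transitions involves, assuming uniform sampling with replacement and using lists in place of arrays. The free function below is illustrative only and is not the method's actual body.

```python
import random


def sample_transitions(buffer, batch_size, rng=None):
    """Draw `batch_size` transitions, with replacement, from a dict of lists."""
    rng = rng or random.Random()
    n_stored = len(next(iter(buffer.values())))
    # One shared index list keeps every key aligned on the same transitions.
    idx = [rng.randrange(n_stored) for _ in range(batch_size)]
    return {key: [values[i] for i in idx] for key, values in buffer.items()}


buffer = {
    'observation': [[0.0, 0.1], [1.0, 1.1], [2.0, 2.1]],
    'reward': [[0.0], [1.0], [2.0]],
}
batch = sample_transitions(buffer, batch_size=4, rng=random.Random(0))
```

Each value in the returned dict has batch_size rows, and row i of every key refers to the same stored transition.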

-

clear(self)¶ Clear buffer.

-

-

class ReplayBuffer(env_spec, size_in_transitions, time_horizon)¶ Abstract class for Replay Buffer.

Parameters: -

full¶ Whether the buffer is full.

Returns: True if the buffer has reached its maximum size, False otherwise.

Return type: bool

-

n_transitions_stored¶ Return the size of the replay buffer.

Returns: Size of the current replay buffer. Return type: int

-

store_episode(self)¶ Add an episode to the buffer.

-

sample(self, batch_size)¶ Sample a batch of batch_size transitions.

Parameters: batch_size (int) – The number of transitions to be sampled.

-

add_transition(self, **kwargs)¶ Add one transition into the replay buffer.

Parameters: kwargs (dict(str, [numpy.ndarray])) – Dictionary that holds the transitions.

-

add_transitions(self, **kwargs)¶ Add multiple transitions into the replay buffer.

A transition contains one or more entries, e.g. observation, action, reward, terminal and next_observation. The same entry from all the transitions is stacked, e.g. {'observation': [obs1, obs2, obs3]}, where obs1 is one numpy.ndarray observation from the environment.

Parameters: kwargs (dict(str, [numpy.ndarray])) – Dictionary that holds the transitions.
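The stacked layout described above can be sketched in plain Python. The helper stack_transitions is hypothetical and uses lists where the buffer expects numpy.ndarray values; it only shows how per-transition entries line up under one key.

```python
def stack_transitions(transitions):
    """Turn a list of per-transition dicts into one dict of stacked entries."""
    keys = transitions[0].keys()
    # Collect the same entry from every transition under a single key.
    return {key: [t[key] for t in transitions] for key in keys}


stacked = stack_transitions([
    {'observation': [0.0, 0.1], 'action': [1.0], 'reward': 0.5},
    {'observation': [0.2, 0.3], 'action': [0.0], 'reward': 1.0},
])
```

A dict in this shape could then be unpacked as keyword arguments, in the style of add_transitions(**stacked).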

-