garage.sampler.fragment_worker

Worker that “vectorizes” environments.

-

class

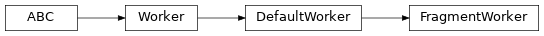

FragmentWorker(*, seed, max_episode_length, worker_number, n_envs=DEFAULT_N_ENVS, timesteps_per_call=1)[source]¶ Bases:

garage.sampler.default_worker.DefaultWorker

Vectorized Worker that collects partial episodes.

Useful for off-policy RL.

- Parameters

seed (int) – The seed to use to initialize random number generators.

max_episode_length (int or float) – The maximum length of episodes which will be sampled. Can be (floating point) infinity.

length of fragments before they're transmitted out of

worker_number (int) – The number of the worker this update is occurring in. This argument is used to set a different seed for each worker.

n_envs (int) – Number of environment copies to use.

timesteps_per_call (int) – Maximum number of timesteps to gather per env per call to the worker. Defaults to 1 (i.e. gather 1 timestep per env each call, or n_envs timesteps in total each call).
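
The arithmetic in the `timesteps_per_call` description can be sketched as follows. The helpers `max_timesteps_per_call` and `worker_seed` are hypothetical names used only for illustration and are not part of garage; in particular, offsetting the seed by `worker_number` is an assumed scheme for giving each worker a distinct seed, not a documented one.

```python
DEFAULT_N_ENVS = 8  # mirrors the documented class attribute


def max_timesteps_per_call(n_envs=DEFAULT_N_ENVS, timesteps_per_call=1):
    """Upper bound on timesteps gathered in one call (hypothetical helper)."""
    return n_envs * timesteps_per_call


def worker_seed(seed, worker_number):
    """Assumed scheme: offset the base seed by the worker number."""
    return seed + worker_number


print(max_timesteps_per_call())        # 8
print(max_timesteps_per_call(4, 10))   # 40
print([worker_seed(100, i) for i in range(3)])  # [100, 101, 102]
```

With the defaults, each call gathers at most 8 timesteps in total, one per environment copy.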

-

DEFAULT_N_ENVS = 8

-

update_env(self, env_update)

Update the environments.

If passed a list (nested inside the list passed to the Sampler itself), distributes its entries across the “vectorization” dimension, one per environment copy.

- Parameters

env_update (Environment or EnvUpdate or None) – The environment to replace the existing env with. Note that other implementations of Worker may take different types for this parameter.

- Raises

TypeError – If env_update is not one of the documented types.

ValueError – If the wrong number of updates is passed.
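
The distribution rule described above might look roughly like the sketch below. `distribute_env_updates` is a hypothetical helper, not garage's implementation: it assumes a single update is copied once per environment and a list must contain exactly `n_envs` entries. The `TypeError` check on the update's type is not modeled here.

```python
import copy


def distribute_env_updates(env_update, n_envs):
    """Return one update per environment copy (hypothetical helper).

    A list must hold exactly n_envs entries, otherwise ValueError is
    raised. Any other value is deep-copied once per environment copy.
    """
    if isinstance(env_update, list):
        if len(env_update) != n_envs:
            raise ValueError(
                f'Expected {n_envs} env updates, got {len(env_update)}')
        return list(env_update)
    return [copy.deepcopy(env_update) for _ in range(n_envs)]


print(distribute_env_updates('env-a', 3))     # ['env-a', 'env-a', 'env-a']
print(distribute_env_updates(['a', 'b'], 2))  # ['a', 'b']
```

Passing a list of the wrong length, for example `distribute_env_updates(['a'], 2)`, raises `ValueError` in this sketch.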

-

step_episode(self)

Take a single time-step in the current episode.

- Returns

True iff at least one of the episodes was completed.

- Return type

bool

-

collect_episode(self)

Gather fragments from all in-progress episodes.

- Returns

A batch of the episode fragments.

- Return type

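How `step_episode` and `collect_episode` interact can be illustrated with a toy model. `ToyFragmentCollector` below is not garage code: it replaces real environments with step counters and represents each fragment as a plain list of `(env_index, step)` tuples, purely to show the "step all copies, then hand over the partial episodes" pattern.

```python
class ToyFragmentCollector:
    """Toy stand-in for a vectorized fragment collector (not garage code)."""

    def __init__(self, n_envs, max_episode_length):
        self.n_envs = n_envs
        self.max_episode_length = max_episode_length
        self.episode_steps = [0] * n_envs             # progress per episode
        self.fragments = [[] for _ in range(n_envs)]  # uncollected timesteps

    def step_episode(self):
        """Advance every env one timestep; True iff any episode completed."""
        any_done = False
        for i in range(self.n_envs):
            self.fragments[i].append((i, self.episode_steps[i]))
            self.episode_steps[i] += 1
            if self.episode_steps[i] >= self.max_episode_length:
                self.episode_steps[i] = 0  # start a fresh episode
                any_done = True
        return any_done

    def collect_episode(self):
        """Hand over all pending fragments and clear the buffers."""
        batch = [frag for frag in self.fragments if frag]
        self.fragments = [[] for _ in range(self.n_envs)]
        return batch


collector = ToyFragmentCollector(n_envs=2, max_episode_length=3)
print(collector.step_episode())     # False
print(collector.step_episode())     # False
print(collector.step_episode())     # True
batch = collector.collect_episode()
print(len(batch), len(batch[0]))    # 2 3
print(collector.collect_episode())  # []
```

Because fragments are handed over before an episode necessarily ends, a consumer such as a replay buffer can use the data without waiting for full episodes, which is why this style suits off-policy RL.
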
-

rollout(self)

Sample a single episode of the agent in the environment.

- Returns

The collected episode.

- Return type

-

worker_init(self)

Initialize a worker.

-

update_agent(self, agent_update)

Update an agent, assuming it implements Policy.

- Parameters

agent_update (np.ndarray or dict or Policy) – If a tuple, dict, or np.ndarray, these should be parameters to agent, which should have been generated by calling Policy.get_param_values. Alternatively, a policy itself. Note that other implementations of Worker may take different types for this parameter.
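
The two kinds of `agent_update` described above can be sketched with a toy policy. `ToyPolicy` and the free function `update_agent` below are illustrative stand-ins, not garage's classes. The sketch assumes parameters are applied through a `set_param_values` method (an assumed counterpart to `Policy.get_param_values`) and handles only the dict and whole-policy cases.

```python
class ToyPolicy:
    """Minimal stand-in for a policy with named parameters (not garage's Policy)."""

    def __init__(self, params):
        self._params = dict(params)

    def get_param_values(self):
        return dict(self._params)

    def set_param_values(self, params):
        self._params = dict(params)


def update_agent(agent, agent_update):
    """Return the agent to use after applying agent_update (hypothetical)."""
    if isinstance(agent_update, dict):
        # Parameters generated by get_param_values: update in place.
        agent.set_param_values(agent_update)
        return agent
    # Otherwise treat the update as a replacement policy.
    return agent_update


worker_policy = ToyPolicy({'w': 0.0})
trained = ToyPolicy({'w': 1.5})
worker_policy = update_agent(worker_policy, trained.get_param_values())
print(worker_policy.get_param_values())  # {'w': 1.5}

replacement = ToyPolicy({'w': 2.0})
worker_policy = update_agent(worker_policy, replacement)
print(worker_policy is replacement)      # True
```

Sending parameters rather than a whole policy keeps the worker's existing policy object alive and typically transfers less data.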