garage.tf.policies.gaussian_mlp_task_embedding_policy

GaussianMLPTaskEmbeddingPolicy.

-

class

GaussianMLPTaskEmbeddingPolicy(env_spec, encoder, name='GaussianMLPTaskEmbeddingPolicy', hidden_sizes=(32, 32), hidden_nonlinearity=tf.nn.tanh, hidden_w_init=tf.initializers.glorot_uniform(seed=deterministic.get_tf_seed_stream()), hidden_b_init=tf.zeros_initializer(), output_nonlinearity=None, output_w_init=tf.initializers.glorot_uniform(seed=deterministic.get_tf_seed_stream()), output_b_init=tf.zeros_initializer(), learn_std=True, adaptive_std=False, std_share_network=False, init_std=1.0, min_std=1e-06, max_std=None, std_hidden_sizes=(32, 32), std_hidden_nonlinearity=tf.nn.tanh, std_output_nonlinearity=None, std_parameterization='exp', layer_normalization=False)

Bases:

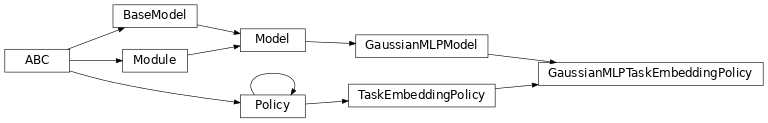

garage.tf.models.GaussianMLPModel, garage.tf.policies.task_embedding_policy.TaskEmbeddingPolicy

A Gaussian MLP policy whose input is the environment observation combined with a latent task embedding produced by the encoder.

- Parameters

env_spec (garage.envs.env_spec.EnvSpec) – Environment specification.

encoder (garage.tf.embeddings.StochasticEncoder) – Embedding network.

name (str) – Model name, also the variable scope.

hidden_sizes (list[int]) – Output dimension of dense layer(s) for the MLP for mean. For example, (32, 32) means the MLP consists of two hidden layers, each with 32 hidden units.

hidden_nonlinearity (callable) – Activation function for intermediate dense layer(s). It should return a tf.Tensor. Set it to None to maintain a linear activation.

hidden_w_init (callable) – Initializer function for the weight of intermediate dense layer(s). The function should return a tf.Tensor.

hidden_b_init (callable) – Initializer function for the bias of intermediate dense layer(s). The function should return a tf.Tensor.

output_nonlinearity (callable) – Activation function for output dense layer. It should return a tf.Tensor. Set it to None to maintain a linear activation.

output_w_init (callable) – Initializer function for the weight of output dense layer(s). The function should return a tf.Tensor.

output_b_init (callable) – Initializer function for the bias of output dense layer(s). The function should return a tf.Tensor.

learn_std (bool) – Whether the std is trainable.

adaptive_std (bool) – Whether the std is computed by a neural network. If False, it is a parameter.

std_share_network (bool) – Whether the mean and std share the same network.

init_std (float) – Initial value for std.

std_hidden_sizes (list[int]) – Output dimension of dense layer(s) for the MLP for std. For example, (32, 32) means the MLP consists of two hidden layers, each with 32 hidden units.

min_std (float) – If not None, the std is at least the value of min_std, to avoid numerical issues.

max_std (float) – If not None, the std is at most the value of max_std, to avoid numerical issues.

std_hidden_nonlinearity (callable) – Nonlinearity for each hidden layer in the std network. It should return a tf.Tensor. Set it to None to maintain a linear activation.

std_output_nonlinearity (callable) – Nonlinearity for output layer in the std network. It should return a tf.Tensor. Set it to None to maintain a linear activation.

std_parameterization (str) –

How the std should be parametrized. There are two options:

- exp: the logarithm of the std is stored, and an exponential transformation is applied to recover the std.

- softplus: the std is computed as log(1+exp(x)).

layer_normalization (bool) – Whether to use layer normalization.
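The two std_parameterization options can be illustrated with NumPy. This is a minimal sketch, not garage's implementation: the helper name `std_from_param` is hypothetical, and clipping the final std to [min_std, max_std] is a simplification of however the library actually applies those bounds.

```python
import numpy as np


def std_from_param(param, parameterization='exp', min_std=1e-6, max_std=None):
    """Map an unconstrained parameter to a positive std (hypothetical helper)."""
    param = np.asarray(param, dtype=np.float64)
    if parameterization == 'exp':
        # The stored value is log(std); exponentiate to recover std.
        std = np.exp(param)
    elif parameterization == 'softplus':
        # std = log(1 + exp(x)); np.logaddexp(0, x) computes this stably.
        std = np.logaddexp(0.0, param)
    else:
        raise ValueError(f'Unknown parameterization: {parameterization}')
    # Simplified bounds: clip the std itself (an assumption, see lead-in).
    lower = min_std if min_std is not None else 0.0
    upper = max_std if max_std is not None else np.inf
    return np.clip(std, lower, upper)


# init_std=1.0 under 'exp' corresponds to a stored log-std of 0.
print(std_from_param(0.0, 'exp'))       # 1.0
print(std_from_param(0.0, 'softplus'))  # log(2), about 0.693
```

The softplus option keeps the std positive without an exponential, which grows more slowly for large parameter values.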

-

build(self, obs_input, task_input, name=None)

Build policy.

- Parameters

obs_input (tf.Tensor) – Observation input.

task_input (tf.Tensor) – One-hot task id input.

name (str) – Name of the model, which is also the name scope.

- Returns

Two values: the policy network (namedtuple) and the encoder network (namedtuple).

- Return type

namedtuple
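The data flow that build sets up (one-hot task id to encoder to latent, then observation plus latent through the MLP to the action mean) can be sketched with NumPy. Everything here is an assumption for illustration: the random weights, the linear stand-in for the encoder, and feeding the encoder mean (rather than a sample) into the MLP. The real method builds TensorFlow graph ops instead.

```python
import numpy as np

rng = np.random.default_rng(0)
O, N, Z, A = 4, 3, 2, 2          # obs dim, number of tasks, latent dim, action dim
hidden_sizes = (32, 32)          # mirrors the default hidden_sizes


def glorot(fan_in, fan_out):
    """Glorot-uniform weights, like the default hidden_w_init."""
    limit = np.sqrt(6.0 / (fan_in + fan_out))
    return rng.uniform(-limit, limit, size=(fan_in, fan_out))


# Hypothetical linear encoder: one-hot task id -> latent mean.
enc_w = glorot(N, Z)

# MLP for the action mean: (O + Z) -> 32 -> 32 -> A.
sizes = (O + Z, *hidden_sizes, A)
weights = [glorot(i, o) for i, o in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros(o) for o in sizes[1:]]   # like tf.zeros_initializer()


def policy_mean(obs, task_onehot):
    latent = task_onehot @ enc_w                    # shape (..., Z)
    x = np.concatenate([obs, latent], axis=-1)      # shape (..., O + Z)
    for w, b in zip(weights[:-1], biases[:-1]):
        x = np.tanh(x @ w + b)                      # hidden_nonlinearity=tanh
    return x @ weights[-1] + biases[-1]             # output_nonlinearity=None


obs = rng.normal(size=(5, O))
tasks = np.eye(N)[[0, 1, 2, 0, 1]]                  # one-hot task ids
print(policy_mean(obs, tasks).shape)                # (5, 2)
```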

-

get_action(self, observation)

Get action sampled from the policy.

- Parameters

observation (np.ndarray) – Augmented observation from the environment, with shape \((O+N, )\). O is the dimension of observation, N is the number of tasks.

- Returns

- np.ndarray: Action sampled from the policy, with shape \((A, )\). A is the dimension of action.

- dict: Action distribution information, with keys:

  - mean (numpy.ndarray): Mean of the distribution, with shape \((A, )\). A is the dimension of action.

  - log_std (numpy.ndarray): Log standard deviation of the distribution, with shape \((A, )\). A is the dimension of action.

- Return type

np.ndarray
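For a diagonal Gaussian, a sample consistent with the returned mean and log_std can be written as action = mean + exp(log_std) * eps, with eps drawn from a standard normal. A minimal NumPy sketch of that relationship; `sample_action` is a hypothetical helper, not part of garage, and the library may draw its samples differently while following the same distribution.

```python
import numpy as np


def sample_action(mean, log_std, rng):
    """Draw one action from a diagonal Gaussian (hypothetical helper)."""
    eps = rng.standard_normal(np.shape(mean))
    action = mean + np.exp(log_std) * eps
    return action, dict(mean=mean, log_std=log_std)


rng = np.random.default_rng(42)
mean = np.array([0.5, -1.0])        # shape (A, ) with A = 2
log_std = np.log([0.1, 0.2])
action, info = sample_action(mean, log_std, rng)
print(action.shape, sorted(info))   # (2,) ['log_std', 'mean']
```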

-

get_actions(self, observations)

Get actions sampled from the policy.

- Parameters

observations (np.ndarray) – Augmented observation from the environment, with shape \((T, O+N)\). T is the number of environment steps, O is the dimension of observation, N is the number of tasks.

- Returns

- np.ndarray: Actions sampled from the policy, with shape \((T, A)\). T is the number of environment steps, A is the dimension of action.

- dict: Action distribution information, with keys:

  - mean (numpy.ndarray): Mean of the distribution, with shape \((T, A)\). T is the number of environment steps, A is the dimension of action.

  - log_std (numpy.ndarray): Log standard deviation of the distribution, with shape \((T, A)\). T is the number of environment steps, A is the dimension of action.

- Return type

np.ndarray
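Each row passed to get_actions is the environment observation followed by the one-hot task id. Assuming integer task indices are available, such a batch can be assembled with NumPy as below; `augment` is a hypothetical helper.

```python
import numpy as np


def augment(observations, task_indices, n_tasks):
    """Append one-hot task ids to a batch of observations (hypothetical helper).

    observations: shape (T, O); task_indices: shape (T, ) of ints in [0, n_tasks).
    Returns an array of shape (T, O + N).
    """
    one_hot = np.eye(n_tasks)[task_indices]            # shape (T, N)
    return np.concatenate([observations, one_hot], axis=1)


obs = np.zeros((3, 4))                                 # T = 3, O = 4
batch = augment(obs, np.array([2, 0, 1]), n_tasks=3)   # N = 3
print(batch.shape)   # (3, 7)
print(batch[0, 4:])  # [0. 0. 1.]
```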

-

get_action_given_latent(self, observation, latent)

Sample an action given observation and latent.

- Parameters

observation (np.ndarray) – Observation from the environment, with shape \((O, )\). O is the dimension of observation.

latent (np.ndarray) – Latent, with shape \((Z, )\). Z is the dimension of the latent embedding.

- Returns

- np.ndarray: Action sampled from the policy, with shape \((A, )\). A is the dimension of action.

- dict: Action distribution information, with keys:

  - mean (numpy.ndarray): Mean of the distribution, with shape \((A, )\). A is the dimension of action.

  - log_std (numpy.ndarray): Log standard deviation of the distribution, with shape \((A, )\). A is the dimension of action.

- Return type

np.ndarray

-

get_actions_given_latents(self, observations, latents)

Sample a batch of actions given observations and latents.

- Parameters

observations (np.ndarray) – Observations from the environment, with shape \((T, O)\). T is the number of environment steps, O is the dimension of observation.

latents (np.ndarray) – Latents, with shape \((T, Z)\). T is the number of environment steps, Z is the dimension of latent embedding.

- Returns

- np.ndarray: Actions sampled from the policy, with shape \((T, A)\). T is the number of environment steps, A is the dimension of action.

- dict: Action distribution information, with keys:

  - mean (numpy.ndarray): Mean of the distribution, with shape \((T, A)\). T is the number of environment steps, A is the dimension of action.

  - log_std (numpy.ndarray): Log standard deviation of the distribution, with shape \((T, A)\). T is the number of environment steps, A is the dimension of action.

- Return type

np.ndarray
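Here latents is paired row by row with observations, so a latent that stays fixed for a whole episode has to be repeated T times. A NumPy sketch; `policy` stands for an already constructed GaussianMLPTaskEmbeddingPolicy, which this snippet does not build, so that call is left commented out.

```python
import numpy as np

T, O, Z = 5, 4, 2
observations = np.random.default_rng(0).normal(size=(T, O))   # shape (T, O)
latent = np.array([0.3, -0.7])                                # shape (Z, )

# Repeat the single latent so row t pairs with observations[t].
latents = np.tile(latent, (T, 1))                             # shape (T, Z)
print(latents.shape)  # (5, 2)

# With a constructed policy (not built in this sketch):
# actions, info = policy.get_actions_given_latents(observations, latents)
```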

-

get_action_given_task(self, observation, task_id)

Sample an action given observation and task id.

- Parameters

observation (np.ndarray) – Observation from the environment, with shape \((O, )\). O is the dimension of the observation.

task_id (np.ndarray) – One-hot task id, with shape \((N, )\). N is the number of tasks.

- Returns

- np.ndarray: Action sampled from the policy, with shape \((A, )\). A is the dimension of action.

- dict: Action distribution information, with keys:

  - mean (numpy.ndarray): Mean of the distribution, with shape \((A, )\). A is the dimension of action.

  - log_std (numpy.ndarray): Log standard deviation of the distribution, with shape \((A, )\). A is the dimension of action.

- Return type

np.ndarray

-

get_actions_given_tasks(self, observations, task_ids)

Sample a batch of actions given observations and task ids.

- Parameters

observations (np.ndarray) – Observations from the environment, with shape \((T, O)\). T is the number of environment steps, O is the dimension of observation.

task_ids (np.ndarray) – One-hot task ids, with shape \((T, N)\). T is the number of environment steps, N is the number of tasks.

- Returns

- np.ndarray: Actions sampled from the policy, with shape \((T, A)\). T is the number of environment steps, A is the dimension of action.

- dict: Action distribution information, with keys:

  - mean (numpy.ndarray): Mean of the distribution, with shape \((T, A)\). T is the number of environment steps, A is the dimension of action.

  - log_std (numpy.ndarray): Log standard deviation of the distribution, with shape \((T, A)\). T is the number of environment steps, A is the dimension of action.

- Return type

np.ndarray

-

get_trainable_vars(self)

Get trainable variables.

The trainable vars of a multitask policy should be the trainable vars of its model and the trainable vars of its embedding model.

- Returns

A list of trainable variables in the current variable scope.

- Return type

List[tf.Variable]

-

get_global_vars(self)

Get global variables.

The global vars of a multitask policy should be the global vars of its model and the global vars of its embedding model.

- Returns

A list of global variables in the current variable scope.

- Return type

List[tf.Variable]

-

property

env_spec(self)

Policy environment specification.

- Returns

Environment specification.

- Return type

garage.EnvSpec

-

property

encoder(self)

garage.tf.embeddings.encoder.Encoder: Encoder.

-

property

augmented_observation_space(self)

akro.Box: Concatenated observation space and one-hot task id.

-

clone(self, name)

Return a clone of the policy.

It copies the configuration of the primitive and also the parameters.

- Parameters

name (str) – Name of the newly created policy. It has to be different from source policy if cloned under the same computational graph.

- Returns

Cloned policy.

- Return type

garage.tf.policies.GaussianMLPTaskEmbeddingPolicy

-

network_output_spec(self)

Network output spec.

-

network_input_spec(self)

Network input spec.

-

property

parameters(self)

Parameters of the model.

- Returns

Parameters

- Return type

np.ndarray

-

property

name(self)

Name (str) of the model.

This is also the variable scope of the model.

- Returns

Name of the model.

- Return type

str

-

property

input(self)

Default input of the model.

When the model is built the first time, by default it creates the ‘default’ network. This property creates a reference to the input of the network.

- Returns

Default input of the model.

- Return type

tf.Tensor

-

property

output(self)

Default output of the model.

When the model is built the first time, by default it creates the ‘default’ network. This property creates a reference to the output of the network.

- Returns

Default output of the model.

- Return type

tf.Tensor

-

property

inputs(self)

Default inputs of the model.

When the model is built the first time, by default it creates the ‘default’ network. This property creates a reference to the inputs of the network.

- Returns

Default inputs of the model.

- Return type

list[tf.Tensor]

-

property

outputs(self)

Default outputs of the model.

When the model is built the first time, by default it creates the ‘default’ network. This property creates a reference to the outputs of the network.

- Returns

Default outputs of the model.

- Return type

list[tf.Tensor]

-

reset(self, do_resets=None)

Reset the module.

This only has an effect on recurrent modules, and do_resets only has an effect on vectorized modules.

For a vectorized module, do_resets is a boolean array indicating which internal states to reset. Its length should equal the length of the inputs.

- Parameters

do_resets (numpy.ndarray) – Bool array indicating which states to be reset.
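For a vectorized recurrent module, do_resets acts as a per-environment mask over the internal states. This policy is an MLP, so reset has no internal state to clear; the sketch below only illustrates the mask semantics. The `hidden` array, the zero initial state, and treating None as "reset all" are assumptions, and `reset_states` is a hypothetical helper.

```python
import numpy as np


def reset_states(hidden, do_resets=None, init_state=0.0):
    """Reset selected rows of a (n_envs, state_dim) state array (hypothetical)."""
    hidden = hidden.copy()
    if do_resets is None:
        # Assumption: no mask given means reset every environment's state.
        do_resets = np.ones(hidden.shape[0], dtype=bool)
    hidden[np.asarray(do_resets, dtype=bool)] = init_state
    return hidden


hidden = np.ones((3, 2))                           # 3 environments, state dim 2
print(reset_states(hidden, [True, False, True]))   # rows 0 and 2 zeroed
```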

-

property

state_info_specs(self)

State info specification.

- Returns

Keys and shapes for the information related to the module’s state when taking an action.

- Return type

List[str]

-

property

state_info_keys(self)

State info keys.

- Returns

Keys for the information related to the module’s state when taking an input.

- Return type

List[str]

-

terminate(self)

Clean up operation.

-

get_regularizable_vars(self)

Get all network weight variables in the current scope.

- Returns

A list of network weight variables in the current variable scope.

- Return type

List[tf.Variable]

-

get_params(self)

Get the trainable variables.

- Returns

A list of trainable variables in the current variable scope.

- Return type

List[tf.Variable]

-

get_param_shapes(self)

Get parameter shapes.

- Returns

A list of variable shapes.

- Return type

List[tuple]

-

get_param_values(self)

Get param values.

- Returns

Values of the parameters evaluated in the current session.

- Return type

np.ndarray

-

set_param_values(self, param_values)

Set param values.

- Parameters

param_values (np.ndarray) – A numpy array of parameter values.

-

flat_to_params(self, flattened_params)

Unflatten tensors according to their respective shapes.

- Parameters

flattened_params (np.ndarray) – A numpy array of flattened params.

- Returns

A list of parameters reshaped to the shapes specified.

- Return type

List[np.ndarray]
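get_param_values, set_param_values, and flat_to_params work with a single flat vector covering all parameters. The round trip between a list of arrays and that vector can be sketched in NumPy; `flatten_params` and `unflatten_params` are hypothetical stand-ins, assuming parameters are concatenated in the order reported by get_param_shapes.

```python
import numpy as np


def flatten_params(params):
    """Concatenate a list of arrays into one flat vector."""
    return np.concatenate([np.ravel(p) for p in params])


def unflatten_params(flat, shapes):
    """Split a flat vector back into arrays with the given shapes."""
    sizes = [int(np.prod(s)) for s in shapes]
    # Split points are the running totals of the sizes (excluding the last).
    chunks = np.split(flat, np.cumsum(sizes)[:-1])
    return [c.reshape(s) for c, s in zip(chunks, shapes)]


shapes = [(6, 32), (32,), (32, 2), (2,)]
params = [np.random.default_rng(i).normal(size=s) for i, s in enumerate(shapes)]
flat = flatten_params(params)
print(flat.shape)  # (290,)
restored = unflatten_params(flat, shapes)
```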

-

get_latent(self, task_id)

Get embedded task id in latent space.

- Parameters

task_id (np.ndarray) – One-hot task id, with shape \((N, )\). N is the number of tasks.

- Returns

- np.ndarray: An embedding sampled from the embedding distribution, with shape \((Z, )\). Z is the dimension of the latent embedding.

- dict: Embedding distribution information.

- Return type

np.ndarray

-

property

latent_space(self)

akro.Box: Space of latent.

-

property

task_space(self)

akro.Box: One-hot space of task id.

-

property

encoder_distribution(self)

tfp.distributions.MultivariateNormalDiag: Encoder distribution.
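A MultivariateNormalDiag over the latent space with mean mu and per-dimension scale sigma has log-density sum_i [-(z_i - mu_i)^2 / (2 sigma_i^2) - log sigma_i - log(2 pi) / 2]. A NumPy sketch of that formula; the helper name is hypothetical, and the TensorFlow Probability distribution object provides this through its own log_prob method.

```python
import numpy as np


def diag_gaussian_log_prob(z, loc, scale_diag):
    """Log-density of a diagonal Gaussian, summed over the last axis."""
    z, loc, scale = (np.asarray(v, dtype=np.float64) for v in (z, loc, scale_diag))
    per_dim = (-0.5 * ((z - loc) / scale) ** 2
               - np.log(scale)
               - 0.5 * np.log(2.0 * np.pi))
    return per_dim.sum(axis=-1)


# At the mean of a standard 2-D Gaussian: -log(2*pi), about -1.8379
print(diag_gaussian_log_prob([0.0, 0.0], [0.0, 0.0], [1.0, 1.0]))
```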

-

split_augmented_observation(self, collated)

Split an augmented observation into the environment observation and the one-hot task id.

- Parameters

collated (np.ndarray) – Environment observation concatenated with task one-hot, with shape \((O+N, )\). O is the dimension of observation, N is the number of tasks.

- Returns

- np.ndarray: Vanilla environment observation, with shape \((O, )\). O is the dimension of observation.

- np.ndarray: Task one-hot, with shape \((N, )\). N is the number of tasks.

- Return type

np.ndarray
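Because the one-hot task id occupies the last N entries of the augmented observation, splitting it is a slice. A NumPy sketch assuming N is known (for example from the task space); `split_augmented` is a hypothetical helper.

```python
import numpy as np


def split_augmented(collated, n_tasks):
    """Split an (O + N, ) augmented observation into (O, ) and (N, ) parts."""
    collated = np.asarray(collated)
    return collated[:-n_tasks], collated[-n_tasks:]


obs, task = split_augmented([0.1, 0.2, 0.3, 0.0, 1.0], n_tasks=2)
print(obs, task)  # [0.1 0.2 0.3] [0. 1.]
```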

-

property

observation_space(self)

Observation space.

- Returns

The observation space of the environment.

- Return type

akro.Space

-

property

action_space(self)

Action space.

- Returns

The action space of the environment.

- Return type

akro.Space