garage.envs.task_onehot_wrapper

Wrapper for appending one-hot task encodings to individual task envs.

See TaskOnehotWrapper.wrap_env_list for the main way of using this module.

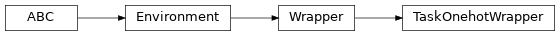

- class TaskOnehotWrapper(env, task_index, n_total_tasks)

Bases:

garage.Wrapper

Append a one-hot task representation to an environment.

See TaskOnehotWrapper.wrap_env_list for the recommended way of creating instances of this class.

- Parameters

env (Environment) – The environment to wrap.

task_index (int) – The index of this task among the tasks.

n_total_tasks (int) – The number of total tasks.
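The effect of the three constructor arguments can be illustrated without garage itself. The following is a minimal sketch, assuming observations are flat vectors; `append_onehot` is a hypothetical helper, not part of the garage API, and plain Python lists stand in for NumPy arrays.

```python
def append_onehot(obs, task_index, n_total_tasks):
    """Return obs followed by a one-hot vector marking task_index.

    Hypothetical helper for illustration; not part of garage.
    """
    if not 0 <= task_index < n_total_tasks:
        raise ValueError('task_index must be in [0, n_total_tasks)')
    onehot = [0.0] * n_total_tasks
    onehot[task_index] = 1.0
    return list(obs) + onehot


# Task 2 of 4: the last four entries identify the task.
augmented = append_onehot([0.5, -1.0], task_index=2, n_total_tasks=4)
print(augmented)  # [0.5, -1.0, 0.0, 0.0, 1.0, 0.0]
```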

- property observation_space

The observation space specification.

- Type

akro.Space
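Because a one-hot vector is appended to every observation, the wrapped observation space is presumably larger than the inner one by `n_total_tasks` dimensions. Below is a minimal sketch of how such bounds could be built, assuming a box-shaped inner space with per-dimension lower and upper bounds and one-hot entries bounded by 0 and 1. `extend_bounds` is a hypothetical helper, and lists stand in for the arrays a space object would hold.

```python
def extend_bounds(low, high, n_total_tasks):
    """Extend box bounds with [0, 1] ranges for the one-hot entries.

    Hypothetical helper for illustration; not part of garage or akro.
    """
    if len(low) != len(high):
        raise ValueError('low and high must have the same length')
    new_low = list(low) + [0.0] * n_total_tasks
    new_high = list(high) + [1.0] * n_total_tasks
    return new_low, new_high


# A 2-dimensional inner space shared by 3 tasks gives 5 dimensions.
low, high = extend_bounds([-1.0, -2.0], [1.0, 2.0], n_total_tasks=3)
print(len(low), low, high)
```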

- property spec

Return the environment specification.

- Returns

The environment specification.

- Return type

- property action_space

The action space specification.

- Type

akro.Space

- property unwrapped

The inner environment.

- Type

- reset()

Sample new task and call reset on new task env.

- Returns

- numpy.ndarray: The first observation conforming to observation_space.

- dict: The episode-level information. Note that this is not part of env_info provided in step(). It contains information about the entire episode, which could be needed to determine the first action (e.g. in goal-conditioned or multi-task RL (MTRL) settings).

- Return type

numpy.ndarray
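The two return values of reset() can be illustrated with a toy example. This is a minimal sketch, assuming the wrapper only modifies the observation and passes the episode-level info through unchanged. `ToyEnv` and `ToyOnehotWrapper` are hypothetical classes (neither exists in garage), and lists stand in for NumPy arrays.

```python
class ToyEnv:
    """Hypothetical stand-in for an inner environment."""

    def reset(self):
        # First observation plus an (empty) episode-level info dict.
        return [0.0, 0.0], {}


class ToyOnehotWrapper:
    """Hypothetical wrapper that appends a one-hot task encoding."""

    def __init__(self, env, task_index, n_total_tasks):
        self._env = env
        self._onehot = [0.0] * n_total_tasks
        self._onehot[task_index] = 1.0

    def reset(self):
        first_obs, episode_info = self._env.reset()
        # Assumption of this sketch: only the observation changes.
        return first_obs + self._onehot, episode_info


env = ToyOnehotWrapper(ToyEnv(), task_index=1, n_total_tasks=3)
first_obs, episode_info = env.reset()
print(first_obs)  # [0.0, 0.0, 0.0, 1.0, 0.0]
```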

- step(action)

Environment step for the active task env.

- Parameters

action (np.ndarray) – Action performed by the agent in the environment.

- Returns

The environment step resulting from the action.

- Return type

- classmethod wrap_env_list(envs)

Wrap a list of environments, giving each environment a one-hot encoding.

This is the primary way of constructing instances of this class. It’s mostly useful when training multi-task algorithms using a multi-task aware sampler.

For example:

```python
# get_mt10_envs, trainer, and LocalSampler are assumed to be defined elsewhere.
envs = get_mt10_envs()
wrapped = TaskOnehotWrapper.wrap_env_list(envs)
sampler = trainer.make_sampler(LocalSampler, env=wrapped)
```

- Parameters

envs (list[Environment]) – List of environments to wrap. Note that the order these environments are passed in determines the value of their one-hot encoding. It is essential that this list is always in the same order, or the resulting encodings will be inconsistent.
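The ordering requirement on `envs` can be made concrete. This is a minimal sketch, assuming each environment's position in the list becomes the position of the 1 in its one-hot vector. `assign_onehots` is a hypothetical helper, and task-name strings stand in for environments.

```python
def assign_onehots(task_names):
    """Map each task to a one-hot vector based on its list position.

    Hypothetical helper for illustration; not part of garage.
    """
    n_total_tasks = len(task_names)
    encodings = {}
    for index, name in enumerate(task_names):
        onehot = [0.0] * n_total_tasks
        onehot[index] = 1.0
        encodings[name] = onehot
    return encodings


first_run = assign_onehots(['reach', 'push', 'pick-place'])
second_run = assign_onehots(['push', 'reach', 'pick-place'])

# Reordering the list changes which vector 'reach' receives.
print(first_run['reach'])   # [1.0, 0.0, 0.0]
print(second_run['reach'])  # [0.0, 1.0, 0.0]
```

Sorting the environments by a stable key, such as the task name, before wrapping is one way to keep the order fixed across runs.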

- Returns

The wrapped environments.

- Return type

- classmethod wrap_env_cons_list(env_cons)

Wrap a list of environment constructors, giving each a one-hot encoding.

This function is useful if you want to avoid constructing any environments in the main experiment process, and are using a multi-task aware remote sampler (i.e. RaySampler).

For example:

```python
# get_mt10_env_cons, NewEnvUpdate, trainer, and RaySampler are assumed to be
# defined elsewhere.
env_constructors = get_mt10_env_cons()
wrapped = TaskOnehotWrapper.wrap_env_cons_list(env_constructors)
env_updates = [NewEnvUpdate(wrapped_con) for wrapped_con in wrapped]
sampler = trainer.make_sampler(RaySampler, env=env_updates)
```

- Parameters

env_cons (list[Callable[Environment]]) – List of environment constructors to wrap. Note that the order these constructors are passed in determines the value of their one-hot encoding. It is essential that this list is always in the same order, or the resulting encodings will be inconsistent.

- Returns

The wrapped environment constructors.

- Return type

list[Callable[TaskOnehotWrapper]]
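Wrapping constructors rather than environments defers construction until each callable is invoked, for instance inside a sampler worker. Here is a minimal sketch of that idea, assuming each wrapped constructor builds the inner environment and pairs it with a one-hot vector for its index. `wrap_constructors`, `make_con`, and the returned dicts are hypothetical stand-ins, not the garage implementation.

```python
def wrap_constructors(env_cons):
    """Return callables that build an env and tag it with a one-hot.

    Hypothetical helper for illustration; not part of garage.
    """
    n_total_tasks = len(env_cons)
    wrapped = []
    for index, con in enumerate(env_cons):
        # Default arguments bind the current index and con; a plain
        # closure would see only the final loop values.
        def make(index=index, con=con):
            onehot = [0.0] * n_total_tasks
            onehot[index] = 1.0
            return {'env': con(), 'onehot': onehot}
        wrapped.append(make)
    return wrapped


built = []  # Records which constructors have actually run.


def make_con(name):
    """Create a toy constructor that logs when it is called."""
    def con():
        built.append(name)
        return 'env-' + name
    return con


wrapped = wrap_constructors([make_con('a'), make_con('b')])
print(built)  # [] because nothing has been constructed yet
result = wrapped[1]()
print(built, result)
```

Only the second constructor runs when `wrapped[1]()` is called, so the main process never builds an environment it does not use.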

- render(mode)

Render the wrapped environment.

- visualize()

Creates a visualization of the wrapped environment.

- close()

Close the wrapped env.