garage.tf.algos.rl2ppo

Proximal Policy Optimization for RL2.

- class RL2PPO(meta_batch_size, task_sampler, env_spec, policy, baseline, sampler, episodes_per_trial, scope=None, discount=0.99, gae_lambda=1, center_adv=True, positive_adv=False, fixed_horizon=False, lr_clip_range=0.01, max_kl_step=0.01, optimizer_args=None, policy_ent_coeff=0.0, use_softplus_entropy=False, use_neg_logli_entropy=False, stop_entropy_gradient=False, entropy_method='no_entropy', meta_evaluator=None, n_epochs_per_eval=10, name='PPO')

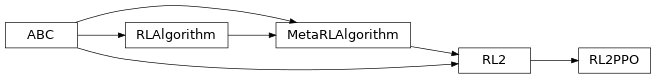

Bases: garage.tf.algos.RL2

Proximal Policy Optimization specific for RL^2.

See https://arxiv.org/abs/1707.06347 for algorithm reference.

- Parameters

meta_batch_size (int) – Meta batch size.

task_sampler (TaskSampler) – Task sampler.

env_spec (EnvSpec) – Environment specification.

policy (garage.tf.policies.StochasticPolicy) – Policy.

baseline (garage.tf.baselines.Baseline) – The baseline.

sampler (garage.sampler.Sampler) – Sampler.

episodes_per_trial (int) – Used to calculate the max episode length for the inner algorithm.

scope (str) – Scope for identifying the algorithm. Must be specified if running multiple algorithms simultaneously, each using different environments and policies.

discount (float) – Discount factor.

gae_lambda (float) – Lambda used for generalized advantage estimation.

center_adv (bool) – Whether to rescale the advantages so that they have mean 0 and standard deviation 1.

positive_adv (bool) – Whether to shift the advantages so that they are always positive. When used in conjunction with center_adv the advantages will be standardized before shifting.

fixed_horizon (bool) – Whether to fix horizon.

lr_clip_range (float) – The limit on the likelihood ratio between policies, as in PPO.

max_kl_step (float) – The maximum KL divergence between old and new policies, as in TRPO.

optimizer_args (dict) – The arguments of the optimizer.

policy_ent_coeff (float) – The coefficient of the policy entropy. Setting it to zero would mean no entropy regularization.

use_softplus_entropy (bool) – Whether to pass the entropy estimate through a softplus function to prevent the entropy from being negative.

use_neg_logli_entropy (bool) – Whether to estimate the entropy as the negative log likelihood of the action.

stop_entropy_gradient (bool) – Whether to stop the entropy gradient.

entropy_method (str) – A string from: ‘max’, ‘regularized’, ‘no_entropy’. The type of entropy method to use. ‘max’ adds the dense entropy to the reward for each time step. ‘regularized’ adds the mean entropy to the surrogate objective. See https://arxiv.org/abs/1805.00909 for more details.

meta_evaluator (garage.experiment.MetaEvaluator) – Evaluator for meta-RL algorithms.

n_epochs_per_eval (int) – If meta_evaluator is passed, meta-evaluation will be performed every n_epochs_per_eval epochs.

name (str) – The name of the algorithm.
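The roles of discount, gae_lambda, center_adv, positive_adv, and lr_clip_range can be illustrated with a minimal pure-Python sketch. This is not garage's implementation: the helper names (gae_advantages, process_advantages, clipped_surrogate), the eps constant, and the exact shift used for positive_adv are assumptions made for illustration only.

```python
def gae_advantages(rewards, values, discount=0.99, gae_lambda=1.0):
    """Generalized advantage estimates for a single episode.

    `values` holds one more entry than `rewards`: the last entry is the
    value estimate of the state reached after the final step.
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        # One-step TD residual at time t.
        delta = rewards[t] + discount * values[t + 1] - values[t]
        # Exponentially weighted sum of future residuals.
        running = delta + discount * gae_lambda * running
        advantages[t] = running
    return advantages


def process_advantages(advantages, center_adv=True, positive_adv=False,
                       eps=1e-8):
    """Optionally standardize, then optionally shift to be positive."""
    adv = list(advantages)
    if center_adv:
        mean = sum(adv) / len(adv)
        std = (sum((a - mean) ** 2 for a in adv) / len(adv)) ** 0.5
        adv = [(a - mean) / (std + eps) for a in adv]
    if positive_adv:
        # Assumed shift: subtract the minimum so every value is > 0.
        low = min(adv)
        adv = [a - low + eps for a in adv]
    return adv


def clipped_surrogate(ratio, advantage, lr_clip_range=0.01):
    """PPO clipped objective for one sample (to be maximized)."""
    clipped = min(max(ratio, 1.0 - lr_clip_range), 1.0 + lr_clip_range)
    return min(ratio * advantage, clipped * advantage)


rewards = [1.0, 0.0, 2.0]
values = [0.5, 0.4, 0.3, 0.0]
raw = gae_advantages(rewards, values, discount=0.99, gae_lambda=1.0)
centered = process_advantages(raw, center_adv=True)
adv = process_advantages(raw, center_adv=True, positive_adv=True)
objective = [clipped_surrogate(1.5, a, lr_clip_range=0.01) for a in adv]
```

With gae_lambda=1 the estimate reduces to the discounted return minus the value baseline. Because standardization runs before the shift, the sketch matches the ordering described for positive_adv above.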

- train(trainer)

Obtain samplers and start actual training for each epoch.

- train_once(itr, episodes)

Perform one step of policy optimization given one batch of samples.

- Parameters

itr (int) – Iteration number.

episodes (EpisodeBatch) – Batch of episodes.

- Returns

Average return.

- Return type

numpy.float64
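The reference above does not say how the average return is computed. As a sketch, assuming it means the mean of the undiscounted per-episode returns in the batch, the calculation could look like the following. The function name average_return and the nested-list batch layout are hypothetical and are not part of garage's EpisodeBatch API.

```python
def average_return(episode_rewards):
    """Mean undiscounted return over a batch of episodes.

    `episode_rewards` is a list with one list of per-step rewards
    per episode (an assumed layout for this illustration).
    """
    returns = [sum(rewards) for rewards in episode_rewards]
    return sum(returns) / len(returns)


batch = [[1.0, 0.0, 2.0], [0.5, 0.5], [3.0]]
avg = average_return(batch)
```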

- get_exploration_policy()

Return a policy used before adaptation to a specific task.

Each time it is retrieved, this policy should only be evaluated in one task.

- Returns

The policy used to obtain samples that are later used for meta-RL adaptation.

- Return type

Policy

- adapt_policy(exploration_policy, exploration_episodes)

Produce a policy adapted for a task.

- Parameters

exploration_policy (Policy) – A policy which was returned from get_exploration_policy(), and which generated exploration_episodes by interacting with an environment. The caller may not use this object after passing it into this method.

exploration_episodes (EpisodeBatch) – Episodes to adapt to, generated by exploration_policy exploring the environment.

- Returns

A policy adapted to the task represented by the exploration_episodes.

- Return type

Policy
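The calling protocol implied by get_exploration_policy() and adapt_policy() can be sketched with toy stand-ins. Everything here apart from those two method names is hypothetical: ToyPolicy, ToyMetaAlgo, explore, and evaluate_on_tasks are illustrative helpers, not garage classes. The toy adaptation rule (averaging observations) is also unrelated to how RL2 actually adapts. The sketch only shows the order of calls: fetch a fresh exploration policy per task, collect episodes with it, hand both to adapt_policy(), and stop using the exploration policy afterwards.

```python
class ToyPolicy:
    """Hypothetical stand-in for a policy; always outputs `guess`."""

    def __init__(self, guess=0.0):
        self.guess = guess

    def get_action(self, observation):
        return self.guess


class ToyMetaAlgo:
    """Hypothetical object exposing the two documented methods."""

    def get_exploration_policy(self):
        # A fresh policy each time, to be used on a single task only.
        return ToyPolicy(guess=0.0)

    def adapt_policy(self, exploration_policy, exploration_episodes):
        # Toy adaptation: average every observation seen while exploring.
        observations = [o for ep in exploration_episodes for o in ep]
        return ToyPolicy(guess=sum(observations) / len(observations))


def explore(policy, task_target, n_episodes=2, horizon=3):
    """Toy rollout: each observation reveals the task's target value."""
    episodes = []
    for _ in range(n_episodes):
        episode = []
        for _ in range(horizon):
            policy.get_action(task_target)  # action is ignored by the toy task
            episode.append(task_target)
        episodes.append(episode)
    return episodes


def evaluate_on_tasks(algo, task_targets):
    """Run the explore-then-adapt protocol once per task."""
    errors = []
    for target in task_targets:
        exploration_policy = algo.get_exploration_policy()
        episodes = explore(exploration_policy, target)
        adapted = algo.adapt_policy(exploration_policy, episodes)
        # The exploration policy must not be reused after adaptation.
        del exploration_policy
        errors.append(abs(adapted.get_action(None) - target))
    return errors


errors = evaluate_on_tasks(ToyMetaAlgo(), [1.0, -2.0, 0.5])
```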