garage.torch.policies.deterministic_mlp_policy

This module creates a deterministic policy network.

A neural network can be used as a policy in different RL algorithms. It accepts an observation of the environment and predicts an action.

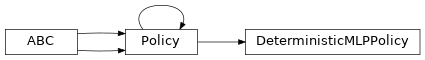

- class DeterministicMLPPolicy(env_spec, name='DeterministicMLPPolicy', **kwargs)

Bases: garage.torch.policies.policy.Policy

Implements a deterministic policy network.

The policy network selects an action based on the state of the environment. It uses a PyTorch neural network module to approximate the policy function pi(s).
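As a rough illustration of what "deterministic" means here, the following NumPy sketch maps a batch of observations to actions through a small MLP with no sampling step. It is not garage's implementation: the class name `TinyDeterministicMLP`, the layer sizes, and the tanh nonlinearity are assumptions made for the example.

```python
import numpy as np


class TinyDeterministicMLP:
    """Hypothetical stand-in for a deterministic policy pi(s)."""

    def __init__(self, obs_dim, action_dim, hidden_dim=8, seed=0):
        rng = np.random.default_rng(seed)
        # Randomly initialised weights; a real policy would be trained.
        self.w1 = rng.normal(scale=0.1, size=(obs_dim, hidden_dim))
        self.b1 = np.zeros(hidden_dim)
        self.w2 = rng.normal(scale=0.1, size=(hidden_dim, action_dim))
        self.b2 = np.zeros(action_dim)

    def forward(self, observations):
        """Map observations (N, obs_dim) to actions (N, action_dim)."""
        hidden = np.tanh(observations @ self.w1 + self.b1)
        # No distribution and no sampling: the output is the action itself.
        return hidden @ self.w2 + self.b2


policy = TinyDeterministicMLP(obs_dim=3, action_dim=2)
obs_batch = np.ones((4, 3))
actions_first = policy.forward(obs_batch)
actions_second = policy.forward(obs_batch)
```

Calling `forward` twice on the same batch gives identical actions, so any exploration noise has to be added outside a policy of this kind.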

- property env_spec

Policy environment specification.

- Returns

Environment specification.

- Return type

- property observation_space

Observation space.

- Returns

The observation space of the environment.

- Return type

akro.Space

- property action_space

Action space.

- Returns

The action space of the environment.

- Return type

akro.Space

- forward(observations)

Compute actions from the observations.

- Parameters

observations (torch.Tensor) – Batch of observations on default torch device.

- Returns

Batch of actions.

- Return type

torch.Tensor

- get_action(observation)

Get a single action given an observation.

- Parameters

observation (np.ndarray) – Observation from the environment.

- Returns

  - np.ndarray: Predicted action.

  - dict:

    - np.ndarray[float]: Mean of the distribution

    - np.ndarray[float]: Log of standard deviation of the distribution

- Return type

tuple

- get_actions(observations)

Get actions given observations.

- Parameters

observations (np.ndarray) – Observations from the environment.

- Returns

  - np.ndarray: Predicted actions.

  - dict:

    - np.ndarray[float]: Mean of the distribution

    - np.ndarray[float]: Log of standard deviation of the distribution

- Return type

tuple
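The relationship between the single-observation and batched methods can be sketched as follows. This is a NumPy illustration under assumptions, not garage's code: `ToyPolicy` and its fixed linear map are hypothetical, and the info dictionary is simply left empty here, so the sketch says nothing about what the real methods put in it.

```python
import numpy as np


class ToyPolicy:
    """Hypothetical policy with a fixed linear map from observation to action."""

    def __init__(self):
        # Shape (obs_dim=3, action_dim=2).
        self.weights = np.array([[1.0, 0.0], [0.0, 2.0], [1.0, 1.0]])

    def get_actions(self, observations):
        """Return (actions, info) for a batch of shape (N, obs_dim)."""
        actions = np.asarray(observations) @ self.weights
        return actions, {}  # info dict left empty in this sketch

    def get_action(self, observation):
        """Return (action, info) for one observation of shape (obs_dim,)."""
        # Add a batch dimension, reuse the batched path, then drop it again.
        actions, info = self.get_actions(np.asarray(observation)[None, :])
        return actions[0], info


policy = ToyPolicy()
action, info = policy.get_action(np.array([1.0, 2.0, 3.0]))
actions, _ = policy.get_actions(np.array([[1.0, 2.0, 3.0], [0.0, 0.0, 1.0]]))
```

Both methods return a pair, so callers unpack the action and the info dictionary together even when they only need the action.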

- get_param_values()

Get the parameters of the policy.

This method is included to ensure consistency with TF policies.

- Returns

The parameters (in the form of the state dictionary).

- Return type

dict

- set_param_values(state_dict)

Set the parameters of the policy.

This method is included to ensure consistency with TF policies.

- Parameters

state_dict (dict) – State dictionary.
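One use of this getter and setter pair is copying parameters from one policy into another, for example to initialise a second copy of a network. The sketch below mimics that round trip with a plain dictionary of NumPy arrays. `ParamHolder` is a hypothetical class; the real methods work with a state dictionary of the PyTorch module rather than with this structure.

```python
import numpy as np


class ParamHolder:
    """Hypothetical object exposing get_param_values / set_param_values."""

    def __init__(self, seed):
        rng = np.random.default_rng(seed)
        self._params = {
            'weight': rng.normal(size=(3, 2)),
            'bias': rng.normal(size=2),
        }

    def get_param_values(self):
        # Return copies so callers cannot mutate the parameters in place.
        return {name: value.copy() for name, value in self._params.items()}

    def set_param_values(self, state_dict):
        # Require the same keys, then overwrite every parameter.
        if state_dict.keys() != self._params.keys():
            raise KeyError('state_dict keys do not match the parameters')
        self._params = {
            name: np.array(value) for name, value in state_dict.items()
        }


source = ParamHolder(seed=0)
target = ParamHolder(seed=1)
target.set_param_values(source.get_param_values())
```

After the call, `target` holds the same values as `source` but in separate arrays, so later updates to one do not leak into the other.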

- reset(do_resets=None)

Reset the policy.

This only has an effect on recurrent policies.

do_resets is an array of booleans indicating which internal states are to be reset. The length of do_resets should be equal to the length of the inputs, i.e. the batch size.

- Parameters

do_resets (numpy.ndarray) – Boolean array indicating which states are to be reset.
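For a recurrent policy, a `do_resets` mask of this kind would typically clear the hidden state of only those batch entries whose episode has just ended. The following NumPy sketch shows that masking. `ToyRecurrentState` and its hidden-state layout are hypothetical, and treating a missing mask as "reset everything" is an assumption of the sketch, not documented behaviour.

```python
import numpy as np


class ToyRecurrentState:
    """Hypothetical holder of one hidden-state vector per batch entry."""

    def __init__(self, batch_size, hidden_dim):
        self.hidden = np.ones((batch_size, hidden_dim))

    def reset(self, do_resets=None):
        if do_resets is None:
            # Assumption for this sketch: no mask means reset every entry.
            do_resets = np.ones(len(self.hidden), dtype=bool)
        do_resets = np.asarray(do_resets, dtype=bool)
        if do_resets.shape != (len(self.hidden),):
            raise ValueError('do_resets must have one entry per batch element')
        # Zero the hidden state only where the mask is True.
        self.hidden[do_resets] = 0.0


state = ToyRecurrentState(batch_size=3, hidden_dim=2)
state.reset(np.array([True, False, True]))
```

Only the first and third rows of `state.hidden` are zeroed; the second entry keeps its state because its mask value is False.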